RAG Development Services That Ground Every AI Answer in Your Verified Data

- 70+ AI Projects Delivered

- 8+ Years Pure AI

- NVIDIA Certified AI Architect

- ISO 27001 Certified

- NVIDIA Inception Partner

- Upwork Top Rated Plus

Trusted by teams across USA, Europe & Asia

- The Enterprise AI Shift

Why RAG Is the Foundation of Enterprise AI in 2026

The real challenges are not in model selection or prompting — they are in document ingestion pipelines that handle 47 formats across 12 systems, chunking strategies that preserve meaning across tables and multi-page sections, embedding models that capture domain-specific semantics your general-purpose embeddings miss, hybrid retrieval that combines dense vector search with sparse keyword search for optimal precision, and knowledge base governance that keeps stale, conflicting, or unauthorized content from corrupting your AI’s answers.

This is exactly where Brainy Neurals operates. We are not a prompt engineering boutique that wraps LangChain around a vector database. We are a RAG consulting and development company that engineers the complete retrieval infrastructure — from document ingestion to vector indexing to retrieval optimization to LLM integration to source citation to production monitoring — for enterprises that cannot afford their AI to be wrong.

- RAG Patterns

RAG Architecture Patterns We Build

Not all RAG is created equal. The right architecture depends on your data types, query patterns, accuracy requirements, and regulatory constraints. We build five distinct RAG patterns — and most enterprise deployments use a combination:

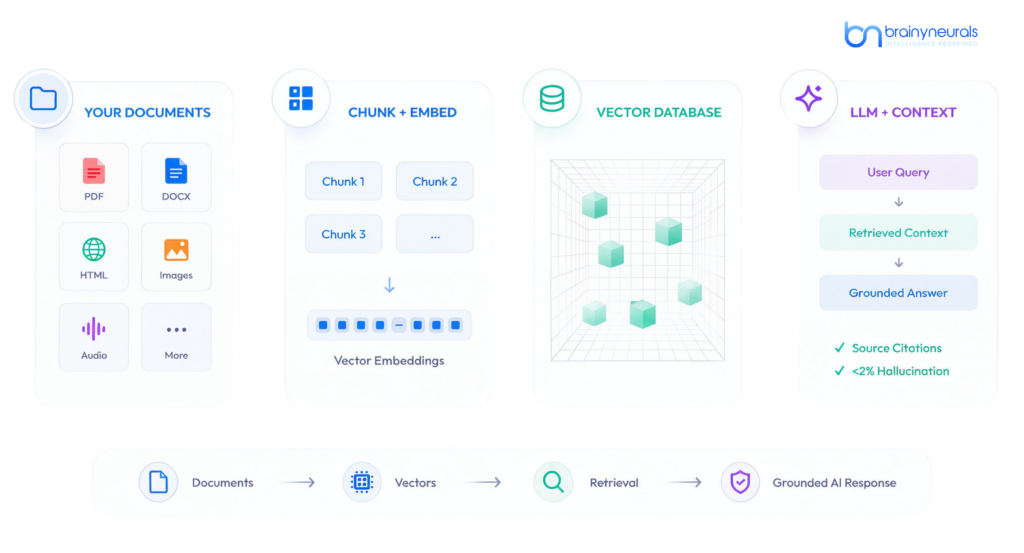

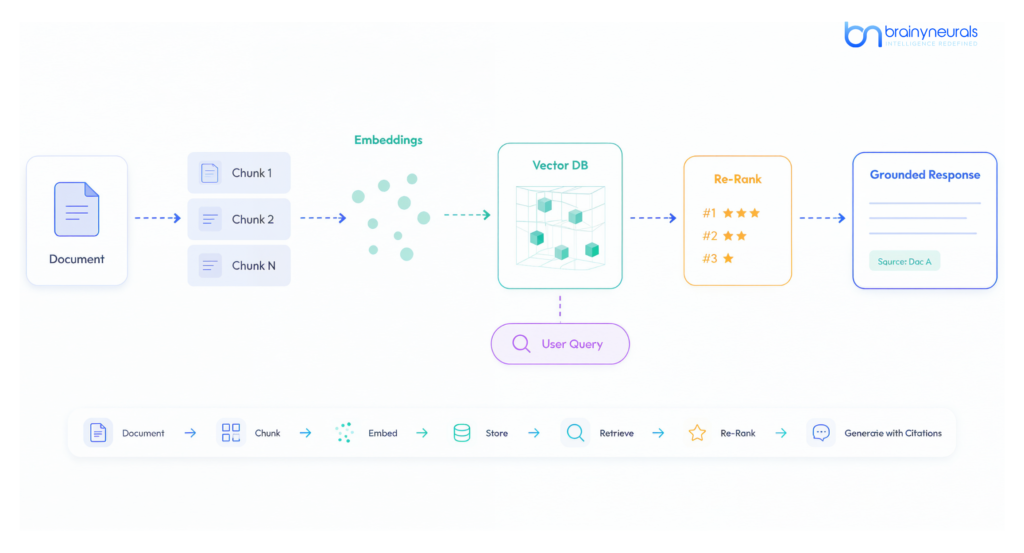

Standard RAG (Vector Search + LLM Generation)

The foundational RAG pattern: documents are chunked, embedded into vectors, stored in a vector database, and retrieved via semantic similarity search when a user asks a question. The retrieved chunks are injected into the LLM’s prompt as context, and the model generates a response grounded in the retrieved information with source citations.
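The flow described above can be sketched in a few lines. This is a minimal illustration, not a production pipeline: the `embed` function is a toy bag-of-words stand-in for a real embedding model, the in-memory `CHUNKS` list stands in for a vector database, and all file names and texts are made up for the example.

```python
import math
import re
from collections import Counter

def embed(text):
    """Toy embedding: a term-count vector (assumption: stands in for a real model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query, chunks, k=2):
    """Return the k chunks most similar to the query, best first."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c["text"])), reverse=True)[:k]

def build_prompt(query, retrieved):
    """Inject retrieved chunks as numbered context so the model can cite them as [n]."""
    context = "\n".join(
        f"[{i}] ({c['source']}) {c['text']}" for i, c in enumerate(retrieved, 1)
    )
    return (
        "Answer using only the context below and cite sources as [n].\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )

# Hypothetical pre-chunked documents.
CHUNKS = [
    {"source": "travel-policy.pdf", "text": "Employees may expense economy flights for business travel."},
    {"source": "security-policy.pdf", "text": "Passwords must be rotated every 90 days."},
    {"source": "hr-handbook.pdf", "text": "Annual leave accrues at two days per month."},
]

top = retrieve("How often must passwords be rotated?", CHUNKS, k=1)
prompt = build_prompt("How often must passwords be rotated?", top)
```

The resulting `prompt` string is what would be sent to the LLM; the numbered context lines are what make source citations possible in the generated answer.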

We build standard RAG with production-grade engineering: intelligent chunking strategies (not fixed-size — we use semantic chunking that preserves paragraph boundaries, table structures, and heading hierarchies), domain-tuned embedding models (general-purpose embeddings like OpenAI text-embedding-3 miss domain-specific semantic relationships that custom or fine-tuned embeddings capture), hybrid retrieval combining dense vector search with sparse BM25 keyword matching (catching exact terms and acronyms that embedding similarity misses), re-ranking with cross-encoder models that score retrieved chunks for relevance before they enter the LLM prompt, and metadata filtering that restricts retrieval to documents the user is authorized to access (critical for multi-tenant enterprise deployments).
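One common way to combine a dense ranking with a BM25 ranking is reciprocal rank fusion (RRF). The sketch below assumes RRF as the fusion method purely for illustration (the text above does not say which method is used), and the document IDs, access groups, and both input rankings are hypothetical.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Fuse ranked lists of doc ids; each list contributes 1 / (k + rank) per doc."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken by doc id for determinism.
    return sorted(scores, key=lambda d: (-scores[d], d))

def filter_authorized(doc_ids, doc_acl, user_groups):
    """Keep only documents whose allowed groups intersect the user's groups."""
    return [d for d in doc_ids if doc_acl.get(d, set()) & user_groups]

dense_ranking = ["doc_a", "doc_b", "doc_c"]    # hypothetical vector-search output
sparse_ranking = ["doc_c", "doc_a", "doc_d"]   # hypothetical BM25 output
DOC_ACL = {
    "doc_a": {"analysts"},
    "doc_b": {"analysts"},
    "doc_c": {"board"},
    "doc_d": {"analysts"},
}

fused = reciprocal_rank_fusion([dense_ranking, sparse_ranking])
visible = filter_authorized(fused, DOC_ACL, user_groups={"analysts"})
```

Here the filter runs after fusion to keep the example short. In a real deployment the authorization filter is usually pushed into the retrieval query itself, so unauthorized chunks are never fetched in the first place.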

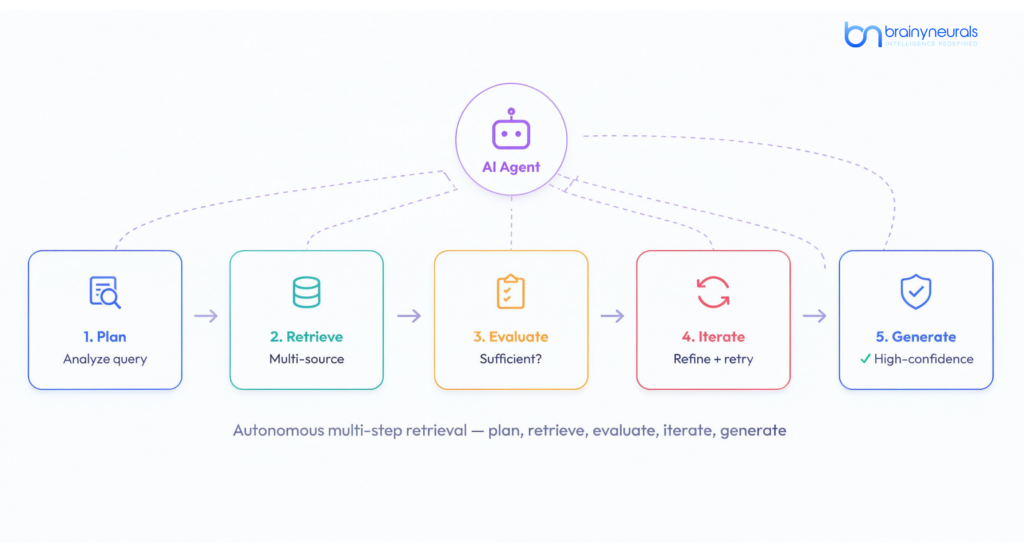

Agentic RAG (Autonomous Multi-Step Retrieval)

Standard RAG retrieves once and generates. Agentic RAG development takes a fundamentally different approach: an AI agent evaluates the query, plans a retrieval strategy, executes multiple retrieval steps across different knowledge sources, evaluates whether the retrieved information is sufficient, and iterates until it has enough context to generate a high-confidence answer. The agent can reformulate queries when initial retrieval returns irrelevant results, chain multiple retrieval calls to gather information from different document collections, validate retrieved content against known constraints before generating, escalate to human review when confidence remains below threshold after maximum retrieval attempts, and call external APIs to supplement knowledge base content with real-time data.

Agentic RAG is essential for complex enterprise queries that span multiple knowledge domains — for example, a compliance question that requires retrieving the relevant regulation, the company’s internal policy interpretation, the latest legal counsel opinion, and the precedent from a similar past case. No single retrieval call answers this question. An agentic system orchestrates the multi-step investigation automatically. We build agentic RAG using LangGraph, CrewAI, and custom agent orchestration frameworks with explicit reasoning traces for auditability.
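The retrieve, evaluate, reformulate, and escalate loop can be sketched without any agent framework. Everything here is a simplified assumption: `search`, `is_sufficient`, and `reformulate` are hypothetical stand-ins for a retriever, an LLM-based sufficiency check, and an LLM-based query rewriter, and the tiny `INDEX` is invented for the example.

```python
def agentic_retrieve(query, search, is_sufficient, reformulate, max_attempts=3):
    """Retrieve, evaluate, and reformulate until context suffices or attempts run out."""
    trace = []      # one entry per attempt, kept for auditability
    context = []
    current = query
    for attempt in range(1, max_attempts + 1):
        results = search(current)
        context.extend(r for r in results if r not in context)
        trace.append({"attempt": attempt, "query": current, "hits": len(results)})
        if is_sufficient(query, context):
            return {"status": "answer", "context": context, "trace": trace}
        current = reformulate(query, context, attempt)
    # Still not enough context after the last attempt: hand off to a person.
    return {"status": "escalate_to_human", "context": context, "trace": trace}

# Hypothetical knowledge sources keyed by exact query string.
INDEX = {
    "data retention regulation": ["Regulation R-12 requires 7-year retention."],
    "data retention internal policy": ["Internal policy IP-4 applies R-12 to all client records."],
}
REFORMULATIONS = ["data retention regulation", "data retention internal policy"]

def search(q):
    return INDEX.get(q, [])

def is_sufficient(query, context):
    return len(context) >= 2    # toy rule: need both the regulation and the policy

def reformulate(query, context, attempt):
    return REFORMULATIONS[(attempt - 1) % len(REFORMULATIONS)]

result = agentic_retrieve("What is our data retention obligation?", search, is_sufficient, reformulate)
```

The `trace` list records each query the loop tried and how many hits it produced, which is the kind of reasoning trace an auditor would want to inspect.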

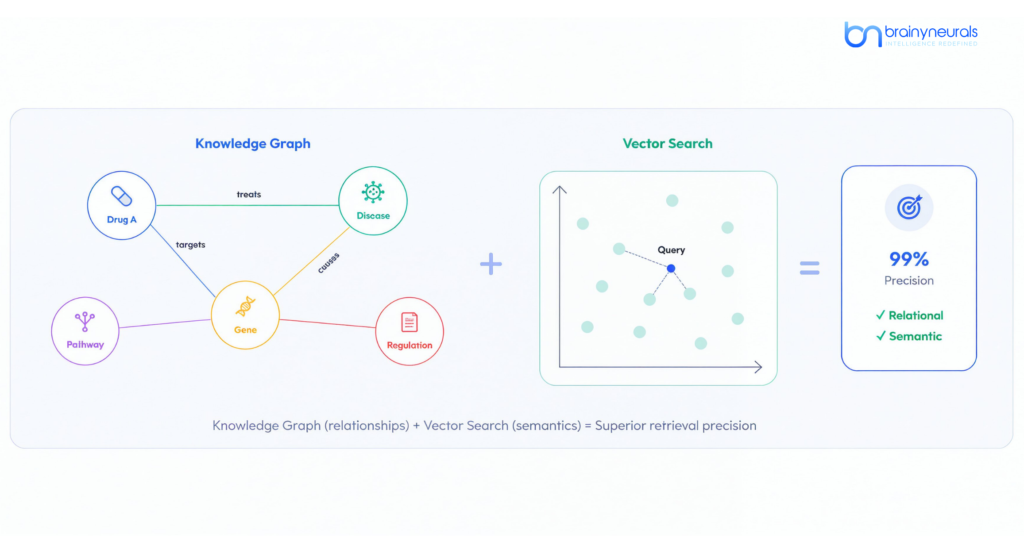

Graph RAG (Knowledge Graph-Enhanced Retrieval)

Graph RAG development combines vector search with structured knowledge graphs to bring relational reasoning into the retrieval process. While vector databases excel at finding semantically similar text, they do not understand relationships between entities — that a specific regulation applies to a specific product category sold in a specific jurisdiction, or that a patient’s medication was prescribed by a specific physician for a specific diagnosis with a specific contraindication history. Knowledge graphs encode these relationships explicitly, enabling retrieval that follows logical connections rather than just semantic similarity.

We build graph RAG systems using Neo4j, Amazon Neptune, and custom graph databases integrated with vector search layers. The knowledge graph provides structured relationship traversal (navigating from entity to entity through typed relationships), while the vector database provides semantic similarity search across unstructured text. The combination achieves retrieval precision that vector search alone cannot match — with published benchmarks showing up to 99% precision on domain-specific queries when the knowledge graph is properly curated. Graph RAG is particularly valuable for pharmaceutical companies (drug-gene-disease-pathway relationships), financial services (entity-transaction-regulation-jurisdiction relationships), and legal applications (case-statute-precedent-jurisdiction relationships).
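To make the idea of relationship traversal concrete, here is a small sketch that uses a plain Python edge list in place of a graph database. The entity names, relation types, and chunk tags are hypothetical; a real system would run the traversal as a query in a graph store and combine it with vector search scores.

```python
from collections import deque

# Hypothetical typed edges: (source entity, relation, target entity).
EDGES = [
    ("Regulation-X", "APPLIES_TO", "ProductCategory-Loans"),
    ("ProductCategory-Loans", "SOLD_IN", "Jurisdiction-EU"),
    ("Regulation-Y", "APPLIES_TO", "ProductCategory-Cards"),
]

def expand(seed, edges, max_hops=2):
    """Breadth-first traversal: entities reachable from seed within max_hops (either direction)."""
    seen = {seed}
    frontier = deque([(seed, 0)])
    while frontier:
        node, depth = frontier.popleft()
        if depth == max_hops:
            continue
        for src, _relation, dst in edges:
            if src == node and dst not in seen:
                nxt = dst
            elif dst == node and src not in seen:
                nxt = src
            else:
                continue
            seen.add(nxt)
            frontier.append((nxt, depth + 1))
    return seen

def filter_by_entities(chunks, entities):
    """Keep chunks tagged with at least one of the related entities."""
    return [c for c in chunks if c["entities"] & entities]

# Hypothetical chunks, each tagged with the entities it mentions.
CHUNKS = [
    {"id": "c1", "entities": {"Regulation-X"}},
    {"id": "c2", "entities": {"Regulation-Y"}},
]

related = expand("Jurisdiction-EU", EDGES, max_hops=2)
candidates = filter_by_entities(CHUNKS, related)
```

Starting from the jurisdiction, the traversal reaches the product category and then the regulation that applies to it, so only the chunk about that regulation survives the filter, even if both chunks look similar to an embedding model.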

Multimodal RAG (Text + Image + Table + Audio Retrieval)

Enterprise knowledge is not text-only. Engineering drawings contain critical dimensions in visual annotations. Financial reports have data locked in tables and charts. Medical records include diagnostic images alongside clinical notes. Training manuals combine text instructions with annotated photographs. Standard text-only RAG misses this visual and structured information entirely. Multimodal RAG development builds retrieval systems that understand and search across text, images, tables, diagrams, and audio transcripts within a unified index.

We build multimodal RAG systems that extract and index text from documents, images from documents (with visual embeddings that capture diagrammatic content), tables as structured data (preserving row-column relationships, not flattening to text), audio transcripts from meeting recordings and call center logs, and cross-modal relationships (linking a table in a financial report to the explanatory text that references it). When a user asks “What was the pressure rating shown in the engineering drawing for valve assembly V-2847?” a multimodal RAG system retrieves the relevant drawing, identifies the annotation containing the pressure rating, and returns the answer with the source image as evidence — something text-only RAG fundamentally cannot do.

RAG for Regulated Industries (Banking, Healthcare, Legal)

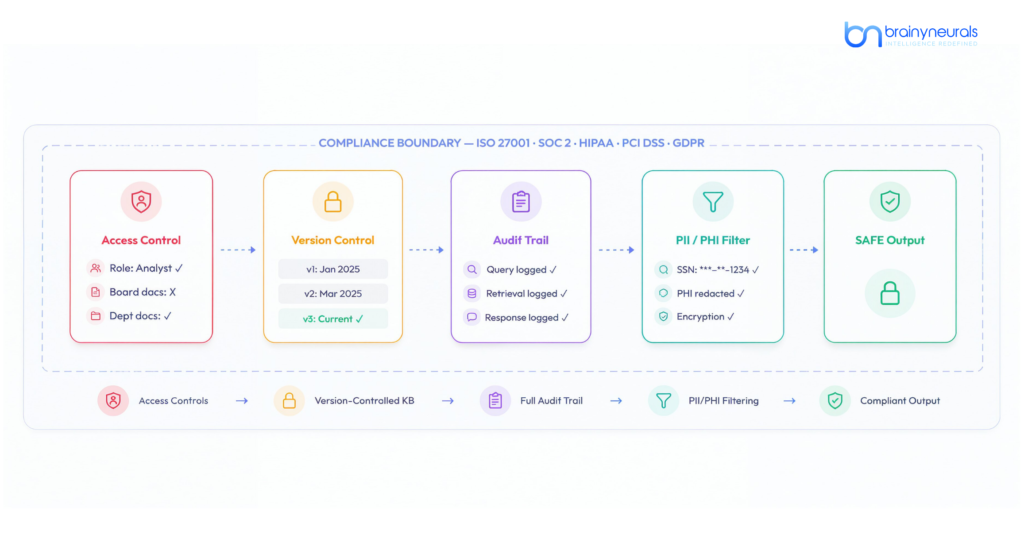

RAG for banking and finance requires architectural features that general-purpose RAG tutorials never address: document-level access controls ensuring that retrieved content respects the user’s authorization level (a junior analyst should not receive context from board-level strategy documents even if they are semantically relevant to the query), complete audit trails logging every retrieval event — which documents were retrieved, which chunks were injected into the prompt, what the LLM generated, and what the user saw — for regulatory examination, version-controlled knowledge bases where regulatory updates are incorporated with effective dates (the system must answer “What was the policy on X as of March 15?” not just “What is the current policy on X?”), and data residency controls ensuring that embeddings and source documents remain within specified geographic boundaries.
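The effective-date requirement amounts to an "as of" lookup over policy versions. The sketch below is one simple way to model it, assuming each version carries a single effective date; the class name, dates, and policy texts are hypothetical.

```python
import bisect
from datetime import date

class VersionedPolicy:
    """Stores policy versions sorted by effective date and answers 'as of' queries."""

    def __init__(self):
        self._dates = []   # effective dates, kept sorted ascending
        self._texts = []   # policy text for the version at the same index

    def add_version(self, effective, text):
        i = bisect.bisect_right(self._dates, effective)
        self._dates.insert(i, effective)
        self._texts.insert(i, text)

    def as_of(self, when):
        """Return the version in force on `when`, or None if none was effective yet."""
        i = bisect.bisect_right(self._dates, when)
        return self._texts[i - 1] if i else None

policy = VersionedPolicy()
policy.add_version(date(2024, 4, 1), "v2: approvals required above $5,000")
policy.add_version(date(2024, 1, 1), "v1: approvals required above $10,000")

in_force_march = policy.as_of(date(2024, 3, 15))
in_force_april = policy.as_of(date(2024, 4, 1))
```

In a retrieval system the same idea is typically applied as a metadata filter, so that only chunks from the version in force on the requested date are eligible for retrieval.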

RAG for healthcare adds HIPAA-compliant architecture: PHI detection in retrieved content with automatic redaction before display to unauthorized users, BAA-ready deployment on HIPAA-compliant infrastructure, clinical vocabulary understanding (SNOMED CT, ICD-10, CPT codes, drug names, dosage forms) in both the embedding model and retrieval logic, and integration with EHR systems (Epic, Cerner) through HL7 FHIR interfaces. Every regulated-industry RAG system we build is designed for ISO 27001, SOC 2, HIPAA, PCI DSS, or GDPR compliance from the architecture level — with Brainy Neurals’ ISO 27001 certification providing verified information security management standards.

- RAG Patterns

How We Select the Right Vector Database for Your RAG System

Vector database selection is one of the most consequential architecture decisions in any RAG deployment. The market has exploded from $1.73 billion in 2024 to a projected $10.6 billion by 2032. Here is our honest assessment:

| Database | Strengths | Limitations | Best For |
|---|---|---|---|
| Pinecone | Fully managed, zero infrastructure. Fastest time-to-production. SOC 2 compliant. Multi-cloud. | No self-hosting option. Cost scales with vector count. Less control over indexing. | Teams wanting fastest deployment without infrastructure management. Startups and mid-market. |
| Weaviate | Native hybrid search (vector + keyword in one query). Built-in modules for embedding generation. Open-source option. | Steeper learning curve than Pinecone. Self-hosted requires DevOps expertise. | Teams needing hybrid search out of the box. Applications where BM25 + vector combination is critical. |
| Qdrant | Best price-performance ratio for self-hosted. Written in Rust for performance. Advanced filtering. | Smaller ecosystem than Pinecone/Weaviate. Fewer pre-built integrations. | Cost-sensitive deployments needing high performance. Teams comfortable with self-hosting. |
| Milvus | Highest scale: handles billions of vectors. Distributed architecture. GPU-accelerated search. | Complex to operate. Requires dedicated infrastructure team for production deployments. | Enterprise-scale deployments with massive vector counts (100M+). Organizations with dedicated platform teams. |
| pgvector | PostgreSQL extension: use your existing database. Zero additional infrastructure. | Performance degrades above ~1M vectors. Limited to PostgreSQL ecosystem. | Small-to-medium RAG deployments where teams already use PostgreSQL and want to minimize infrastructure. |

This vector database comparison is something no competitor RAG services page publishes — because most vendors have a default recommendation regardless of client requirements. We are database-agnostic. We evaluate your scale requirements, query patterns, infrastructure preferences, compliance needs, and budget constraints to recommend the right database — or combination of databases — for your specific deployment.

- Technology

Our RAG Technology Stack

Technically convinced? Book a free 30-minute RAG architecture assessment — we'll evaluate your documents, query patterns, and optimal retrieval strategy.

- Industries

Industries Where Our RAG Solutions Deliver ROI

Strongest Domain

RAG for banking powers the most document-intensive workflows in financial services: compliance assistants that answer regulatory questions with source citations from your policy library, KYC document analysis systems that cross-reference customer submissions against multiple verification databases, loan underwriting support that retrieves relevant guidelines, precedents, and risk factors, AML investigation tools that connect transaction patterns with regulatory alerts and case histories, and wealth management research assistants that synthesize market data, analyst reports, and client portfolio context. Every banking RAG system includes SOC 2-compliant audit trails, document-level access controls, and version-controlled knowledge bases with effective-date awareness.

RAG for healthcare enables AI that is both knowledgeable and HIPAA-compliant: clinical decision support systems that retrieve relevant clinical guidelines, drug interactions, and treatment protocols grounded in evidence-based sources, patient education assistants that generate accurate health information from verified medical literature, pharmaceutical regulatory assistants that retrieve relevant FDA guidance, ICH guidelines, and submission precedents, and clinical trial knowledge bases that help research teams search across protocols, amendments, and regulatory correspondence. Healthcare RAG architecture includes PHI detection, automatic de-identification, BAA-ready deployment, and EHR integration through HL7 FHIR.

Legal & Professional Services

Legal RAG transforms how law firms and corporate legal teams access knowledge: contract Q&A systems that answer questions about specific clauses across thousands of agreements, legal research assistants that retrieve relevant case law, statutes, and regulatory guidance, matter management knowledge bases that connect current work to historical precedents within the firm, and compliance monitoring systems that track regulatory changes and automatically flag impacts on existing contracts and policies.

Enterprise RAG for manufacturing and operations: maintenance knowledge assistants that help technicians troubleshoot equipment by retrieving relevant sections from manuals, maintenance histories, and known-issue databases, quality investigation tools that connect defect reports with material specifications, process parameters, and supplier quality data, and enterprise search systems that replace keyword search across SharePoint, Confluence, Salesforce, and 15 other knowledge repositories with a single natural language interface that understands what you mean, not just what you type.

- Delivered Results

RAG Projects We Have Delivered

Financial Services

RAG-Powered Compliance Assistant — 50,000+ Documents Monthly

Manual research

97%

Retrieval accuracy

Healthcare

HIPAA-Compliant Clinical Knowledge Base — 15 Min → 30 Seconds

HIPAA-compliant RAG system for a healthcare organization. Clinicians query clinical guidelines, drug information, and treatment protocols using natural language. System retrieves evidence-based content from curated medical literature with SNOMED CT and ICD-10 entity linking. Integrated with Epic EHR through HL7 FHIR for patient-context-aware retrieval.

15 min manual search

30s

AI-assisted retrieval

Enterprise

Multi-Source Knowledge Search — 12 Repositories, 8,000+ Users

Enterprise RAG system replacing keyword search across 12 internal knowledge repositories (SharePoint, Confluence, Salesforce Knowledge, internal wikis, PDF document libraries, archived email) for a mid-market technology company. 8,000+ employees query all organizational knowledge through a single natural language interface. 2,000+ queries daily with sub-3-second response times.

25 min avg

<1 min

Time to answer

Legal

Contract Intelligence System — 15,000+ Contracts Queried via Natural Language

RAG system enabling a corporate legal team to query 15,000+ contracts using natural language: “Which vendor agreements have liability caps below $500K?” System extracts clauses, maps them to a structured taxonomy, and stores both vector embeddings and structured metadata in a hybrid index. Agentic RAG pattern enables multi-step queries spanning multiple contract types.

Manual review

15K+

Contracts searchable

- Our Process

How We Deliver RAG Projects

Every RAG development engagement follows our production-proven methodology — designed to get you from documents to deployed enterprise RAG solution in the shortest path with the lowest risk. Our RAG pipeline development process has been refined across dozens of production deployments.

Ongoing: Knowledge Base Maintenance & Optimization

Automated sync pipelines keep the knowledge base current as source documents change. Monthly retrieval quality audits. User feedback loops surface knowledge gaps. Embedding and LLM upgrades when newer models offer measurable improvement. Your RAG system delivers better answers in month 12 than in month 1.
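A sync pipeline needs some way to decide which documents changed since the last run. One simple approach, assumed here for illustration, is to compare a content hash of each source document with the hash stored at indexing time; the document IDs and texts are hypothetical.

```python
import hashlib

def fingerprint(text):
    """Stable content hash used to detect changed documents."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()

def plan_sync(source_docs, indexed_hashes):
    """Return (ids to re-embed and upsert, ids to delete from the index)."""
    to_upsert = sorted(
        doc_id for doc_id, text in source_docs.items()
        if indexed_hashes.get(doc_id) != fingerprint(text)
    )
    to_delete = sorted(doc_id for doc_id in indexed_hashes if doc_id not in source_docs)
    return to_upsert, to_delete

# Hashes recorded the last time the index was built (hypothetical state).
indexed = {
    "policy-1": fingerprint("Old wording."),
    "policy-2": fingerprint("Unchanged wording."),
    "policy-3": fingerprint("Retired policy."),
}
# Current state of the source repository.
current = {
    "policy-1": "New wording.",
    "policy-2": "Unchanged wording.",
    "policy-4": "Brand new policy.",
}

to_upsert, to_delete = plan_sync(current, indexed)
```

Only changed and new documents are re-embedded, and documents removed from the source are deleted from the index so stale content cannot be retrieved.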

- Honest Comparison

DIY RAG vs. RAG Platform vs. Brainy Neurals

- Why Us

Why Enterprise Teams Choose Brainy Neurals for RAG

RAG Is an Architecture Problem, Not a Library Problem

Any developer can pip install langchain and build a RAG demo in an afternoon. Making that demo work reliably at enterprise scale — with 50,000 documents, 47 formats, multi-tenant access controls, sub-3-second latency, and compliance audit trails — is an engineering challenge that requires production AI experience. Brainy Neurals has been building production AI systems since 2018 across 70+ projects. We understand the failure modes that tutorials do not cover: embedding drift, retrieval degradation, context window overflow, and the “needle in a haystack” problem.

Five RAG Patterns, Not One

Most RAG development companies build standard vector-search-plus-LLM pipelines. As a specialized RAG development company, Brainy Neurals goes further. We build five distinct patterns: standard RAG for straightforward Q&A, agentic RAG for complex multi-step reasoning, graph RAG for relationship-rich domains, multimodal RAG for visual and tabular content, and regulated-industry RAG for banking, healthcare, and legal compliance requirements. We select and combine patterns based on your actual query complexity and data characteristics — not based on what we built for the last client.

NVIDIA Certified AI Architect — Founder-Led RAG Engineering

Brainy Neurals is founded and led by Mitesh Patel, an NVIDIA Certified AI Architect with 8+ years of production AI experience. Mitesh’s individual Upwork Top Rated Plus profile provides third-party verification of delivery excellence. Our NVIDIA Inception partnership, AWS Activate membership, and Microsoft for Startups participation validate our engineering capabilities across all three major AI platforms. We deploy RAG systems on AWS Bedrock, Azure OpenAI Service, GCP Vertex AI, or self-hosted infrastructure.

ISO 27001 + Compliance-First RAG Architecture

RAG systems access your most sensitive enterprise knowledge — policy documents, financial records, medical guidelines, legal opinions. Our ISO 27001 certification ensures information security management meets international standards. Every RAG system we build includes document-level access controls, retrieval audit logging, PII detection, data encryption, and compliance-ready deployment architecture. We design for SOC 2, HIPAA, PCI DSS, and GDPR from the first line of code.

US Market Credibility

Leadership team with direct experience at leading U.S. consumer brands and enterprise retailers. We operate during EST and GMT business hours with daily standups, weekly demos, response times under 4 hours, and full IP ownership on every project: zero lock-in, zero vendor dependency.

Download: Enterprise RAG Architecture Decision Guide

- FAQ

Frequently Asked Questions About RAG Development

What are RAG development services?

RAG development services build enterprise retrieval-augmented generation systems that connect large language models to your proprietary data sources. Instead of relying on an LLM’s training data (which leads to hallucination on domain-specific questions), RAG retrieves verified information from your knowledge bases, document repositories, and databases at query time, then generates accurate, citation-backed answers grounded in your actual data. RAG development services from Brainy Neurals include knowledge base AI development, vector database development and optimization, document ingestion pipelines, retrieval strategy engineering, LLM integration, guardrails implementation, and production monitoring — delivered as a complete, production-ready system that you own. Our RAG consulting services also cover architecture evaluation and technology selection for teams that need expert guidance before committing to implementation.

What is the difference between standard RAG, agentic RAG, and graph RAG?

Standard RAG retrieves documents via vector similarity search and generates a response in a single pass. Agentic RAG uses an AI agent that plans multi-step retrieval strategies, evaluates results, and iterates until it has sufficient context — essential for complex queries spanning multiple knowledge domains. Graph RAG combines vector search with knowledge graphs that encode entity relationships, enabling retrieval that follows logical connections (regulation-applies-to-product-in-jurisdiction) rather than just semantic similarity. Most enterprise deployments use a combination of patterns. Brainy Neurals evaluates your query complexity and data characteristics to recommend the optimal architecture.

Which vector database should I use for my RAG system?

The right vector database depends on your scale, infrastructure preferences, and compliance requirements. Pinecone offers the fastest managed deployment with SOC 2 compliance. Weaviate provides native hybrid search combining vectors and keywords. Qdrant delivers the best price-performance for self-hosted deployments. Milvus handles the highest scale (billions of vectors). pgvector works within existing PostgreSQL infrastructure for smaller deployments. Brainy Neurals is database-agnostic — we evaluate your requirements and recommend the right choice, including hybrid approaches using multiple databases for different retrieval tiers.

How do you prevent hallucination in RAG systems?

We prevent hallucination through architectural layers: RAG grounding ensures every response is based on retrieved, verified documents. Confidence scoring routes low-confidence responses to human review. Source citation requirements force the model to reference specific documents for every claim. Output validation checks generated responses against retrieved content for factual consistency. Guardrails block responses that contain claims not supported by retrieved context. In our production RAG deployments, these layers reduce hallucination rates to below 2% on domain-specific queries — compared to 15-25% hallucination rates for ungrounded LLMs.
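The output-validation and routing layers can be illustrated with a deliberately crude check. This sketch scores each generated sentence by token overlap with the retrieved chunks and routes the answer to human review if any sentence falls below a threshold. Lexical overlap is an assumption made to keep the example self-contained; a production validator would more likely use an entailment model or an LLM judge, and the threshold value is arbitrary.

```python
import re

def tokens(text):
    """Lowercased alphanumeric tokens as a set."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def support_score(sentence, chunks):
    """Fraction of the sentence's tokens found in the best-matching retrieved chunk."""
    s = tokens(sentence)
    if not s:
        return 1.0
    return max((len(s & tokens(c)) / len(s) for c in chunks), default=0.0)

def route(answer, chunks, threshold=0.6):
    """Deliver the answer only if every sentence is supported by retrieved context."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", answer) if s.strip()]
    unsupported = [s for s in sentences if support_score(s, chunks) < threshold]
    return ("human_review", unsupported) if unsupported else ("deliver", [])

retrieved = ["Passwords must be rotated every 90 days."]

grounded = route("Passwords must be rotated every 90 days.", retrieved)
ungrounded = route(
    "Passwords must be rotated every 90 days. Violations incur a fine of 500 dollars.",
    retrieved,
)
```

The second answer is held back because its claim about fines appears nowhere in the retrieved context, which is the behavior the guardrail layer is meant to enforce.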

Can you build RAG for regulated industries like banking and healthcare?

Yes. We specialize in RAG for banking and finance (SOC 2, PCI DSS, GDPR compliant, with document-level access controls and version-controlled knowledge bases) and RAG for healthcare (HIPAA compliant with PHI detection, automatic de-identification, and EHR integration through HL7 FHIR). Brainy Neurals is ISO 27001 certified, providing verified information security management standards. Our NVIDIA Inception partnership, AWS Activate membership, and Microsoft for Startups participation validate our platform-level security capabilities.

- Explore More

Related Services & Pages

Generative AI Development

Document AI & IDP

Document AI pipelines feed extracted, structured data into RAG knowledge bases.

AI Agent & Copilot Development

Agentic RAG powers autonomous agents that reason through multi-step retrieval tasks.

AI Consulting & Strategy

Not sure if RAG is the right approach? Our consulting team evaluates architecture options.

AI in Banking & Finance

RAG for compliance, KYC research, and regulatory guidance in financial services.

AI in Healthcare

HIPAA-compliant RAG for clinical decision support and pharmaceutical knowledge management.

AI POC & Pilot Development

Validate your RAG concept in 4-6 weeks with a working prototype on your actual documents.

- Let’s Build AI for Your Everyday Challenges

Among the Top 3% of Global AI Professionals.

- 50+ AI Systems in Production

- 9+ Years in Production AI

Led by an NVIDIA Certified AI Architect. Backed by AWS, Microsoft & NVIDIA ecosystems. ISO 27001 certified for enterprise-grade security.

Every call is a free technical assessment — not a sales pitch.

- We respond within 24 hours

Or email: hello@brainyneurals.com