The global AI in textile manufacturing market is projected to grow from $4.12 billion in 2025 to $68.44 billion by 2035, a 32.45% compound annual growth rate.

The textile industry is growing steadily, with the global market valued at around USD 1.16 trillion in 2025 and expected to reach USD 1.61 trillion by 2033, a CAGR of 4.2%. This growth is mainly driven by the rise of fast fashion, increasing urbanization, and higher disposable incomes in emerging economies.

These projections sound impressive in a pitch deck. But on a factory floor in Dhaka, Karachi, or Guangzhou, they mean nothing until they solve a specific problem: the roll of denim that just failed brand QC for the third time this month, the digital print run rejected because tiling artifacts showed up on leg panels, or the 23% of fabric defects your manual inspectors missed last quarter.

AI in textile production is not one technology. It is a stack of distinct engineering capabilities including computer vision for defect detection, signal processing for weave analysis, statistical modeling for texture synthesis, and computational geometry for garment panel mapping. Each of these solves a different problem in the production chain. The companies deploying textile AI successfully are not the ones chasing the broadest models. They are the ones matching the right AI technique to the right manufacturing bottleneck.

This is a practical breakdown of how AI is being applied across textile manufacturing processes: what works on the factory floor, what falls short, and what it really takes to move from a proof of concept to a production-ready system.

Where AI Creates Measurable Value in Textile Production

Before diving into architecture, it helps to map where AI intersects with the textile manufacturing chain and what specific value it delivers at each stage. Not every stage needs deep learning. Not every problem needs a generative model. The engineering discipline is in choosing correctly.

# Fabric Defect Detection and Quality Control

This is the most mature and commercially validated application of AI in the textile industry, and the clearest example of AI quality inspection delivering measurable results on the factory floor. High-resolution line-scan cameras positioned above the moving fabric capture images at web speeds of up to 60 meters per minute. These camera setups feed directly into computer vision models, typically object detection architectures such as YOLO variants or Faster R-CNN, or instance segmentation networks, which analyze frames in real time to identify defects including misweaves, broken yarns, stains, holes, color bleeding, and pattern misalignment.
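To see what a 60 m/min web demands of a line-scan camera, the required line rate follows directly from fabric speed and the along-web pixel footprint. A minimal sketch; the function names, the 0.1 mm pixel footprint, and the 4,096-pixel sensor are illustrative assumptions, not values from any specific inspection product.

```python
def required_line_rate_hz(speed_m_per_min: float, pixel_size_mm: float) -> float:
    """Scan lines per second so that each line covers pixel_size_mm of fabric
    along the direction of travel (square pixels assumed)."""
    speed_mm_per_s = speed_m_per_min * 1000.0 / 60.0
    return speed_mm_per_s / pixel_size_mm


def cross_web_coverage_mm(sensor_pixels: int, pixel_size_mm: float) -> float:
    """Fabric width imaged by one line-scan camera at the given pixel footprint."""
    return sensor_pixels * pixel_size_mm


if __name__ == "__main__":
    # Hypothetical setup: 0.1 mm pixel footprint and a 4096-pixel line sensor.
    print(f"line rate: {required_line_rate_hz(60.0, 0.1):,.0f} lines/s")
    print(f"coverage per camera: {cross_web_coverage_mm(4096, 0.1):.1f} mm")
```

At 60 m/min and 0.1 mm per pixel this works out to 10,000 lines per second, and one 4,096-pixel camera covers about 41 cm of width, so a full-width web needs several cameras side by side.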

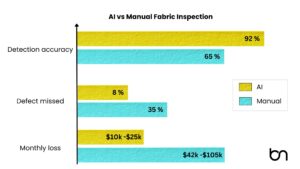

The numbers make the case. Manual textile inspection achieves 60 to 70% accuracy, with human inspectors missing 20 to 30% of defects due to fatigue and perceptual variation. AI fabric inspection systems such as WiseEye detect over 40 types of fabric defects with accuracy exceeding 90%. The fabric defect detection AI market reached $523 million in 2024 and is projected to hit $6.5 billion by 2033, growing at a 33.8% CAGR, which makes it one of the fastest-growing segments in manufacturing AI.

But implementation is not as simple as pointing a camera at fabric. Deploying camera-based AI on a production line requires careful systems engineering. Production-grade textile quality control systems need controlled lighting rigs calibrated to fabric type, training datasets that distinguish legitimate material variation from actual defects, edge deployment on platforms such as NVIDIA Jetson for real-time inference with sub-100 ms latency, and integration with the factory MES for automated downstream actions such as flagging, regrading, or halting the line.
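Latency matters because the fabric keeps moving while a frame is processed. The sketch below computes how far the web travels during inference, which sets the minimum distance between the camera and any downstream marker or stop actuator. The function names, the 20 ms actuation delay, and the 1.5x safety margin are hypothetical design inputs, not figures from the text.

```python
def travel_during_latency_mm(speed_m_per_min: float, latency_ms: float) -> float:
    """Distance (mm) the fabric moves while a detection decision is pending."""
    speed_mm_per_ms = speed_m_per_min * 1000.0 / 60_000.0
    return speed_mm_per_ms * latency_ms


def min_marker_offset_mm(speed_m_per_min: float, inference_ms: float,
                         actuation_ms: float, margin: float = 1.5) -> float:
    """Minimum camera-to-marker distance; margin is a hypothetical safety factor."""
    return margin * travel_during_latency_mm(speed_m_per_min, inference_ms + actuation_ms)


if __name__ == "__main__":
    print(travel_during_latency_mm(60.0, 100.0))    # 100.0 (mm at 60 m/min)
    print(min_marker_offset_mm(60.0, 100.0, 20.0))  # 180.0 (mm)
```

At 60 m/min the fabric moves 1 mm per millisecond, so a 100 ms budget already means 10 cm of travel before any action can be taken.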

Key engineering tradeoff: higher detection sensitivity catches more defects but also increases false positives, flagging natural yarn variation as defects. Tuning this threshold is fabric-specific and requires textile domain expertise, not just ML expertise.
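One common way to inspect this tradeoff is to sweep the detector's confidence threshold over a labeled validation set and read off recall (defects caught) against false positives (natural variation flagged). A minimal sketch; the scores and labels are made-up placeholders, and `sweep_thresholds` is an illustrative name. A real sweep would use per-fabric validation data.

```python
from typing import List, Tuple


def sweep_thresholds(scores: List[float], is_defect: List[bool],
                     thresholds: List[float]) -> List[Tuple[float, float, int]]:
    """For each threshold, return (threshold, recall, false_positives).

    recall: fraction of true defects scored at or above the threshold.
    false_positives: non-defect regions (e.g. slubs, yarn variation) still flagged.
    """
    n_defects = sum(is_defect)
    results = []
    for t in thresholds:
        tp = sum(1 for s, d in zip(scores, is_defect) if d and s >= t)
        fp = sum(1 for s, d in zip(scores, is_defect) if not d and s >= t)
        recall = tp / n_defects if n_defects else 0.0
        results.append((t, recall, fp))
    return results


if __name__ == "__main__":
    # Placeholder validation data: detector confidence per inspected region.
    scores = [0.95, 0.80, 0.62, 0.40, 0.70, 0.55, 0.30, 0.20]
    labels = [True, True, True, True, False, False, False, False]
    for t, r, fp in sweep_thresholds(scores, labels, [0.3, 0.5, 0.7]):
        print(f"threshold={t:.1f} recall={r:.2f} false_positives={fp}")
```

In this toy data, dropping the threshold from 0.7 to 0.3 raises recall from 0.50 to 1.00 but triples the false positives from 1 to 3; where to sit on that curve is the fabric-specific judgment described above.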

# Color Matching and Dye Process Optimization

Color consistency across textile batches is a perennial challenge. Subtle variations in tone, brightness, and saturation cause batch-to-batch inconsistency that is unacceptable in brand apparel. Traditional color matching relied on human inspection and manual dye formula adjustment, a slow and error-prone process.

AI-based color matching systems use high-resolution cameras under controlled lighting to capture fabric samples. Computer vision evaluates key color attributes against target specifications. Artificial neural networks trained on extensive datasets predict how dyes behave under different conditions, such as fabric type, temperature, pH, and application method, and then suggest optimal dye formulations. Machine learning models also optimize water, energy, and chemical usage in dyeing, directly supporting sustainability mandates. This kind of AI in textile production is not speculative; it is operational in mills across China, India, and Turkey today.
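A standard way to express "color attributes against target specifications" is a color difference in CIELAB. The sketch below converts 8-bit sRGB readings to CIELAB (D65 white point) and computes the simple CIE76 ΔE*ab. This is an assumption about how such a check could look: production color QC more often uses CMC l:c or CIEDE2000 and calibrated instruments rather than raw sRGB, and the 1.0 tolerance in the hypothetical `within_tolerance` helper is a placeholder.

```python
import math
from typing import Tuple

# sRGB (D65) linear-RGB to XYZ matrix and the D65 reference white.
_M = ((0.4124564, 0.3575761, 0.1804375),
      (0.2126729, 0.7151522, 0.0721750),
      (0.0193339, 0.1191920, 0.9503041))
_WHITE = (0.95047, 1.00000, 1.08883)


def _linearize(c8: int) -> float:
    """Undo the sRGB transfer curve for one 8-bit channel."""
    c = c8 / 255.0
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def _f(t: float) -> float:
    """CIELAB companding function."""
    delta = 6.0 / 29.0
    return t ** (1.0 / 3.0) if t > delta ** 3 else t / (3 * delta ** 2) + 4.0 / 29.0


def srgb_to_lab(rgb: Tuple[int, int, int]) -> Tuple[float, float, float]:
    lin = [_linearize(c) for c in rgb]
    x, y, z = (sum(m * v for m, v in zip(row, lin)) for row in _M)
    fx, fy, fz = _f(x / _WHITE[0]), _f(y / _WHITE[1]), _f(z / _WHITE[2])
    return 116.0 * fy - 16.0, 500.0 * (fx - fy), 200.0 * (fy - fz)


def delta_e76(lab1, lab2) -> float:
    """CIE76 color difference: Euclidean distance in CIELAB."""
    return math.dist(lab1, lab2)


def within_tolerance(sample_rgb, target_rgb, tol: float = 1.0) -> bool:
    """Hypothetical pass/fail check of a sample against a target shade."""
    return delta_e76(srgb_to_lab(sample_rgb), srgb_to_lab(target_rgb)) <= tol
```

Swapping `delta_e76` for a CIEDE2000 implementation changes only the distance function; the conversion pipeline stays the same.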

# Digital Textile Printing: From Design to Print-Ready Output

The digital textile printing AI market reached $7.63 billion in 2026 and is projected to grow to $22.46 billion by 2035 at 12.75% CAGR. Digital printing now accounts for 38% of global printed textile volume, up from 24% five years ago. AI enhances this pipeline at multiple points: generative design tools create patterns from text prompts, AI optimizes color separation and ink deposition, and computer vision validates print quality in real time.

But one of the most technically demanding and commercially valuable applications of AI in digital textile printing is not about printing at all. It is about creating the print-ready digital master surface from a physical fabric scan. This is where most operations hit the wall, and where we spent considerable engineering effort solving the problem for denim specifically.

The Denim Problem: Why Fabric Texture Engineering Is Harder Than Anyone Expects

Consider this workflow, common in any denim operation exploring digital sampling: you scan a 5 cm by 5 cm swatch of indigo twill at 600 DPI. Clean sample, no defects. Now you need a seamless 1.5 meter by 5 meter digital surface at the same resolution: production-ready, print-ready, and ready for brand approval.

That is about 4.18 billion pixels. A single uncompressed master file at 16 bits per RGB channel exceeds 24 GB.
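The arithmetic behind those figures, as a small sketch. It assumes "16 bit" means 16 bits per channel across three RGB channels with no alpha, and uses 1 inch = 25.4 mm; function names are illustrative.

```python
INCH_M = 0.0254  # meters per inch


def pixels_for(length_m: float, dpi: int) -> int:
    """Pixels needed to cover length_m at the given dots per inch."""
    return round(length_m / INCH_M * dpi)


def master_size(width_m: float, length_m: float, dpi: int = 600,
                channels: int = 3, bytes_per_channel: int = 2):
    """Return (width_px, height_px, total_px, uncompressed_bytes)."""
    w, h = pixels_for(width_m, dpi), pixels_for(length_m, dpi)
    total = w * h
    return w, h, total, total * channels * bytes_per_channel


if __name__ == "__main__":
    w, h, px, nbytes = master_size(1.5, 5.0)
    swatch = pixels_for(0.05, 600)
    print(f"{w} x {h} px = {px / 1e9:.2f} billion pixels")
    print(f"uncompressed 16-bit RGB: {nbytes / 1e9:.1f} GB")
    print(f"5 cm swatch: {swatch} px per side, area ratio {px / swatch ** 2:,.0f}x")
```

The master comes out at 35,433 by 118,110 pixels, roughly 4.18 billion pixels and about 25.1 GB (decimal) uncompressed, which is around 3,000 times the pixel area of the scanned swatch.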

If your response is “use a super-resolution model” or “tile it with a GAN,” you are about to hit a wall that costs three months and a mid-six-figure budget. Here is why.

# Denim Is Not an Image. It Is a Woven Structure.

Denim is a warp-faced twill, typically 3/1 or 2/1, with indigo-dyed warp yarns interlacing over undyed weft yarns in a diagonal ridge structure. That structure is inherently anisotropic: it behaves differently along the warp than along the weft. Slub yarns introduce irregular thickness variations. Indigo oxidation creates non-uniform dye gradients. Micro-fiber noise adds physical complexity that is real, not decorative.

The visual authenticity of digital denim texture depends on maintaining all of these simultaneously: twill diagonal continuity across the entire surface, correct warp-to-weft frequency ratios, natural slub distribution (typically every 8 to 15 mm along the warp, with 12 to 20% intensity variance), fiber-level micro-noise aligned to yarn direction, and non-repeating dye variation at low spatial frequencies.
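The warp-to-weft ratio has a direct geometric consequence. For a twill that advances a fixed number of ends per pick, the angle of the twill line follows from the thread densities: a balanced cloth gives 45 degrees, and a warp-denser cloth gives a steeper line. A small sketch of that textbook relation; the densities in the example are hypothetical, and the model ignores crimp and shrinkage.

```python
import math


def twill_angle_deg(ends_per_cm: float, picks_per_cm: float, step: int = 1) -> float:
    """Angle of the twill line measured from the weft (horizontal) direction.

    Each pick shifts the interlacement `step` ends sideways, so the line rises
    1/picks_per_cm while moving step/ends_per_cm across:
    tan(theta) = ends_per_cm / (step * picks_per_cm).
    """
    return math.degrees(math.atan2(ends_per_cm, step * picks_per_cm))


if __name__ == "__main__":
    print(twill_angle_deg(20, 20))            # balanced construction: 45 degrees
    # Hypothetical warp-faced construction, denser in warp than in weft.
    print(round(twill_angle_deg(28, 18), 1))
```

This is why a synthesized surface with the wrong warp-to-weft frequency ratio shows a visibly wrong twill angle, even if each ridge looks plausible on its own.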

Standard computer vision approaches treat texture as a 2D signal. Denim requires reasoning about a 3D woven lattice projected into 2D. Generic texture generators optimize for visual plausibility, but denim manufacturing requires structural correctness. These are fundamentally different objectives.

# Why Every Off-the-Shelf Approach Fails

Interpolation-based upscaling enlarges pixels but introduces no structural variation. Twill ridges blur. Slub events stretch unnaturally. Tonal drift is absent. The result looks digitally smooth, the opposite of denim.

GAN-based texture generation produces plausible patches but introduces uncontrolled weave irregularities, twill angle drift, and artificial periodicity within the first 50 to 100 cm. The output is non-deterministic and non-reproducible, which is unacceptable for production workflows where repeatability is mandatory.

Naive tiling creates visible repetition patterns detectable by any trained textile eye. A 5 cm tile repeated 30 times across a leg panel produces rhythmic visual artifacts that trigger immediate rejection in brand approval.
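Why naive tiling is so easy to catch: a tiled surface is exactly periodic, so its autocorrelation shows a full-strength peak at the tile width. A minimal 1D NumPy sketch, using random noise as a stand-in for one row of a scanned tile; the function names and the 0.9 relative-peak rule are illustrative choices, and this demonstrates the detection principle rather than a brand-approval metric.

```python
import numpy as np


def autocorrelation_1d(signal: np.ndarray) -> np.ndarray:
    """Normalized circular autocorrelation computed via the FFT (Wiener-Khinchin)."""
    x = signal - signal.mean()
    power = np.abs(np.fft.rfft(x)) ** 2
    ac = np.fft.irfft(power, n=len(x))
    return ac / ac[0]


def dominant_repeat(signal: np.ndarray, min_lag: int = 2, rel: float = 0.9) -> int:
    """Smallest lag whose autocorrelation is within `rel` of the strongest peak.

    Taking the smallest such lag avoids reporting a multiple of the true period.
    """
    ac = autocorrelation_1d(signal)[min_lag:len(signal) // 2]
    return int(np.flatnonzero(ac >= rel * ac.max())[0] + min_lag)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    tile = rng.normal(size=64)        # stand-in for one tile's intensity row
    tiled = np.tile(tile, 30)         # naive tiling: 30 repeats
    fresh = rng.normal(size=64 * 30)  # non-repeating surface of equal length
    print(dominant_repeat(tiled))     # 64: the tile width shows up directly
    print(autocorrelation_1d(tiled)[64], autocorrelation_1d(fresh)[64])
```

The tiled row correlates perfectly with itself one tile over, while the non-repeating row stays near zero at the same lag; the eye picks up the same rhythm across a leg panel.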

How We Solved It: Architecture of a Computational Textile Reconstruction Engine

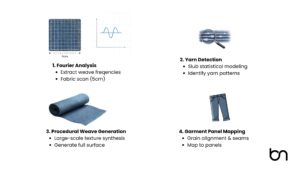

The correct framing is not “how do we make this image bigger” but “how do we reconstruct the underlying woven structure at a larger scale.” The scanned swatch is evidence of a material, not a picture to enlarge. This reframing changes every architectural decision. Our computational textile reconstruction engine works in four stages.

Stage 1: Structural Fingerprinting via Fourier Domain Analysis

The pipeline begins by extracting the weave’s structural DNA. Fourier domain analysis identifies dominant warp and weft frequencies, the precise spatial periodicity of the yarn grid. Directional filtering isolates the twill diagonal vector. Orientation tensors quantify the strength and direction of anisotropy. The output is not a visual description; it is a parametric specification of the weave geometry: ridge spacing, interlacement angle, frequency ratios, and directional bias.
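The core of the Fourier step can be sketched with a 2D FFT: the strongest non-DC peak in the power spectrum gives the dominant ridge frequency, its period in pixels, and its orientation. A minimal NumPy sketch on a synthetic diagonal pattern; `dominant_frequency` is an illustrative name, the directional filtering and orientation-tensor steps are not shown, and a real twill scan would show several harmonic peaks rather than one clean cosine.

```python
import numpy as np


def dominant_frequency(img: np.ndarray):
    """Strongest spatial frequency of a grayscale image.

    Returns (fx, fy, period_px, angle_deg): fx, fy in cycles/pixel, the period
    along the wave vector, and the wave vector's angle from the x axis. The
    ridges themselves run perpendicular to that vector.
    """
    h, w = img.shape
    power = np.abs(np.fft.fft2(img - img.mean())) ** 2
    power[0, 0] = 0.0  # drop any residual DC term
    iy, ix = np.unravel_index(np.argmax(power), power.shape)
    fy, fx = np.fft.fftfreq(h)[iy], np.fft.fftfreq(w)[ix]
    # A real image has conjugate-symmetric peaks at +f and -f; fold to fy >= 0.
    if fy < 0 or (fy == 0 and fx < 0):
        fx, fy = -fx, -fy
    return fx, fy, 1.0 / np.hypot(fx, fy), float(np.degrees(np.arctan2(fy, fx)))


if __name__ == "__main__":
    n = 256
    y, x = np.mgrid[0:n, 0:n]
    rng = np.random.default_rng(1)
    # Synthetic diagonal ridges (16 cycles across x, 32 down y) plus noise,
    # standing in for a scanned swatch.
    img = np.cos(2 * np.pi * (16 * x + 32 * y) / n) + 0.5 * rng.normal(size=(n, n))
    fx, fy, period, angle = dominant_frequency(img)
    print(f"fx={fx:.4f} fy={fy:.4f} period={period:.2f}px angle={angle:.1f}deg")
```

At 600 DPI, a period in pixels converts to millimeters by multiplying by 25.4 / 600, which is how such a fingerprint becomes a physical ridge spacing.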

Stage 2: Yarn Detection and Statistical Slub Modeling

Edge-enhanced filtering isolates individual warp ridges. Ridge-tracking algorithms detect yarn centerlines and compute spacing distributions. Slub events, the irregular thickness variations that give denim its characteristic hand feel, are identified as amplitude anomalies along warp lines. Their spacing, length, and intensity are captured as statistical distributions, not fixed patterns. This is critical: deterministic slub placement creates detectable repetition, while distribution-based stochastic placement creates natural variation.
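The distribution-based idea can be sketched in 1D along a single warp line: detect slubs as runs of robust z-score outliers, summarize their spacing as a distribution, then resample new positions from it. Everything here is illustrative: the function names, the median/MAD z-score, the threshold of 4, the 3-sample minimum run, and the normal gap model are assumptions rather than the engine's actual parameters, and a fuller model would also fit slub length and intensity distributions.

```python
import numpy as np


def detect_slubs(profile, mm_per_px, z_thresh=4.0, min_len_px=3):
    """Detect slubs along one warp line as runs of robust z-score outliers.

    Returns (center_mm, length_mm, peak_z) per event. The median/MAD z-score keeps
    the slubs themselves from inflating the noise estimate.
    """
    med = np.median(profile)
    mad = 1.4826 * np.median(np.abs(profile - med)) + 1e-12
    z = (profile - med) / mad
    above = np.append(z > z_thresh, False)  # sentinel closes a trailing run
    events, start = [], None
    for i, flag in enumerate(above):
        if flag and start is None:
            start = i
        elif not flag and start is not None:
            if i - start >= min_len_px:  # ignore single-sample noise spikes
                center_mm = (start + i - 1) / 2 * mm_per_px
                events.append((center_mm, (i - start) * mm_per_px, float(z[start:i].max())))
            start = None
    return events


def gap_distribution(events):
    """Mean and standard deviation (mm) of spacing between consecutive slubs."""
    gaps = np.diff([e[0] for e in events])
    return float(gaps.mean()), float(gaps.std())


def sample_slub_positions(length_mm, gap_mean, gap_std, rng, min_gap=1.0):
    """Stochastic placement: draw each gap from a normal, floored at min_gap."""
    positions, pos = [], rng.uniform(0.0, gap_mean)
    while pos < length_mm:
        positions.append(pos)
        pos += max(min_gap, rng.normal(gap_mean, gap_std))
    return positions
```

Because each new surface draws fresh gaps from the fitted distribution, no two warp lines share the same slub sequence, which is the property fixed placement lacks.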

Stage 3: Procedural Re-Weaving at Target Scale

With the structural fingerprint extracted, a generative woven lattice is synthesized at target dimensions (1.5 m by 5 m at 600 DPI). Warp and weft fields are generated from learned spacing distributions. A twill interlacement mask defines the over-under pattern. Slub events are inserted stochastically. Micro-fiber noise aligned to yarn direction is layered on top. Low-frequency dye variation is synthesized independently to prevent the flat, uniform look that betrays digital origin.
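The lattice-building steps can be sketched at toy scale. The mask formula below is the standard construction of an up/down twill; the tone values, per-end jitter, and cosine-sum dye drift are placeholder choices, not the engine's actual models, and slub insertion and direction-aligned micro-fiber noise are omitted. A real 1.5 m by 5 m master would also be generated in strips or tiles rather than as one in-memory array.

```python
import numpy as np


def twill_mask(picks: int, ends: int, up: int = 3, down: int = 1, step: int = 1) -> np.ndarray:
    """Interlacement mask for an up/down twill (True = warp floats over weft).

    Each successive pick shifts the pattern by `step` ends, which produces the
    diagonal. up=3, down=1 gives a warp-faced 3/1 twill.
    """
    rows = np.arange(picks)[:, None]
    cols = np.arange(ends)[None, :]
    return ((cols + step * rows) % (up + down)) < up


def render_surface(picks: int, ends: int, cell_px: int = 4, seed: int = 0) -> np.ndarray:
    """Toy procedural surface: twill mask, per-end warp shading, low-frequency dye drift."""
    rng = np.random.default_rng(seed)
    mask = twill_mask(picks, ends)
    # Darker indigo warp with small end-to-end tone jitter; lighter undyed weft.
    warp_tone = 0.25 * (1.0 + 0.05 * rng.normal(size=(1, ends)))
    threads = np.where(mask, warp_tone, 0.85)
    img = np.kron(threads, np.ones((cell_px, cell_px)))  # one cell per interlacement
    # Low-frequency dye variation: a few random cosines of 0.5 to 2 cycles per image.
    h, w = img.shape
    y, x = np.mgrid[0:h, 0:w]
    drift = np.zeros_like(img)
    for _ in range(4):
        cy, cx = rng.uniform(0.5, 2.0, size=2)
        phase = rng.uniform(0.0, 2.0 * np.pi)
        drift += np.cos(2.0 * np.pi * (cy * y / h + cx * x / w) + phase)
    img = img * (1.0 + 0.03 * drift / 4.0)
    return np.clip(img, 0.0, 1.0)
```

Keeping the dye drift as a separate multiplicative field is what lets it vary independently of the weave, as the stage description requires.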

The result is a physically plausible large-area fabric surface with zero tiling artifacts, no detectable boundary seams, and no visible periodicity. It is validated against physical reference rolls using textile-specific fidelity metrics rather than generic image quality scores such as SSIM or PSNR.

Stage 4: Geometry-Aware Garment Panel Mapping

A front leg panel is not a rectangle. Neither is a yoke, a waistband, or a pocket. Each garment panel has a specific non-uniform shape with a defined grain direction. Mapping the synthesized master texture onto these panels requires anisotropic warping that preserves warp spacing, maintains thread frequency across curvature, and enforces seam boundary alignment between adjacent panels.

Uniform scaling fails because denim warp and weft respond differently to directional stretching: thread thickness distorts, the twill angle shifts, and slubs elongate. A geometry-aware mapping engine uses finite-element-inspired local coordinate frames aligned to grain direction. Pre-assembly visualization then simulates the stitched garment for validation, so seam continuity is verified computationally before any print job is submitted.
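A quick way to see how stretching distorts the weave: under a linear map that scales the weft (x) direction by sx and the warp (y) direction by sy, a twill line at angle θ maps to arctan((sy/sx)·tan θ). Equal scaling keeps the angle but changes thread spacing by the scale factor; unequal scaling, as happens when a rectangle is forced onto a tapered or curved panel, rotates the twill line. An illustrative sketch; function names and example values are hypothetical.

```python
import math


def twill_angle_after_stretch(theta_deg: float, sx: float, sy: float) -> float:
    """Angle from the weft (x) axis of a twill line after scaling x by sx and y by sy.

    A direction (cos t, sin t) maps to (sx cos t, sy sin t), so
    tan(theta') = (sy / sx) * tan(theta).
    """
    t = math.radians(theta_deg)
    return math.degrees(math.atan2(sy * math.sin(t), sx * math.cos(t)))


def end_spacing_after_stretch(spacing_px: float, sx: float) -> float:
    """Warp ends are spaced along x, so their spacing scales directly with sx."""
    return spacing_px * sx


if __name__ == "__main__":
    print(twill_angle_after_stretch(58.0, 1.2, 1.2))  # unchanged angle, 20% wider ends
    print(twill_angle_after_stretch(58.0, 1.0, 1.1))  # 10% warp-only stretch
```

A 10% stretch along the warp alone moves a 58 degree twill line to roughly 60.4 degrees, a shift a trained eye can spot at a seam where two panels meet.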

Alternative Synthesis Approaches

Patch-field optimization selects and places original scan patches using graph-based energy minimization to suppress boundary discontinuities, twill phase mismatch, and long-range periodic correlation. Gradient-domain blending removes seam edges without destroying thread definition. This approach preserves original pixel authenticity at a 3 to 5 times higher computational cost.
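The energy-minimization idea is easiest to see on a single row of patch slots. A row is a chain graph, so dynamic programming (Viterbi) finds the exact minimum of the summed seam costs. A minimal NumPy sketch; the function names, the sum-of-squared-differences seam cost, and the `repeat_penalty` term (a crude stand-in for the periodicity penalty) are assumptions. A full 2D patch field needs graph-cut or similar solvers instead, and gradient-domain blending is not shown.

```python
import numpy as np


def seam_cost(left: np.ndarray, right: np.ndarray, overlap: int) -> float:
    """Sum of squared differences where right's first columns overlap left's last."""
    diff = left[:, -overlap:] - right[:, :overlap]
    return float(np.sum(diff * diff))


def best_patch_row(patches, n_slots, overlap, repeat_penalty=0.0):
    """Choose one patch per slot along a row to minimize total seam cost.

    Returns (sequence_of_patch_indices, total_cost). repeat_penalty discourages
    placing the same patch twice in a row.
    """
    k = len(patches)
    pair = np.array([[seam_cost(patches[i], patches[j], overlap)
                      + (repeat_penalty if i == j else 0.0)
                      for j in range(k)] for i in range(k)])
    cost = np.zeros(k)  # best cost of a row ending in patch j at the current slot
    back = np.zeros((n_slots, k), dtype=int)
    for s in range(1, n_slots):
        total = cost[:, None] + pair  # total[i, j]: previous patch i, place j
        back[s] = np.argmin(total, axis=0)
        cost = total[back[s], np.arange(k)]
    seq = [int(np.argmin(cost))]
    for s in range(n_slots - 1, 0, -1):  # trace the optimal path backwards
        seq.append(int(back[s][seq[-1]]))
    return seq[::-1], float(cost.min())
```

On a real scan the candidate patches would be crops of the original swatch, which is why this family of methods keeps original pixels intact.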

Material field decomposition separates texture into interpretable layers: twill carrier signal, slub event map, micro fiber noise, and dye gradient. Each layer is modeled independently. Synthesis samples from these distributions while enforcing orientation consistency, preventing repetition through statistical independence.
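The layered idea can be sketched with the simplest possible separation: an ideal low-pass mask in the Fourier domain peels a slowly varying layer, where dye gradients would live, away from everything else. This is only a first cut under stated assumptions; separating the twill carrier from slub events and fiber noise would need the directional and event-based models described earlier, and an ideal mask can ring on real scans, so smoother filters are a common alternative. `split_bands` is an illustrative name.

```python
import numpy as np


def split_bands(img: np.ndarray, cutoff: float):
    """Split an image into low- and high-frequency layers with an ideal FFT mask.

    cutoff is in cycles/pixel. The mask is symmetric in frequency, so the low
    layer is real; low + high reconstructs the input exactly.
    """
    fy = np.fft.fftfreq(img.shape[0])[:, None]
    fx = np.fft.fftfreq(img.shape[1])[None, :]
    keep = np.hypot(fx, fy) <= cutoff
    low = np.real(np.fft.ifft2(np.fft.fft2(img) * keep))
    return low, img - low


if __name__ == "__main__":
    n = 64
    x = np.tile(np.arange(n), (n, 1))
    dye = np.cos(2 * np.pi * 2 * x / n)      # slow drift: 2 cycles per image
    twill = np.cos(2 * np.pi * 16 * x / n)   # fast carrier: 16 cycles per image
    low, high = split_bands(dye + twill, cutoff=0.05)
    print(np.allclose(low, dye), np.allclose(high, twill))
```

Once separated, each layer can be modeled and resampled on its own, which is what makes the decomposition resistant to repetition.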

ROI: The Math Behind Investing in Textile AI

The value equation differs by application, but the framework is consistent.

For defect detection: a mid-size mill running 2,000,000 meters of fabric per month with a 3% defect rate and manual inspection catching 65% of defects ships roughly 21,000 meters of defective fabric per month (2,000,000 × 3% × 35% escaped). At an average claim cost of $2 to $5 per meter, that is $42,000 to $105,000 in monthly quality losses. A fabric defect detection AI system catching 92% of defects reduces escaped defects by 77%, saving $32,000 to $81,000 monthly against a system cost that typically pays back in 4 to 8 months.
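The same arithmetic, as a small sketch that can be rerun for a specific mill. Throughput, defect rate, catch rates, and claim cost are all inputs; the function names are illustrative and none of the values correspond to a real customer.

```python
def escaped_defect_meters(meters_per_month: float, defect_rate: float,
                          catch_rate: float) -> float:
    """Defective meters that pass inspection and reach the customer."""
    return meters_per_month * defect_rate * (1.0 - catch_rate)


def monthly_quality_loss(meters_per_month, defect_rate, catch_rate, claim_cost_per_m):
    """Claim cost of escaped defects per month."""
    return escaped_defect_meters(meters_per_month, defect_rate, catch_rate) * claim_cost_per_m


def escaped_reduction(manual_catch: float, ai_catch: float) -> float:
    """Relative drop in escaped defects when inspection improves (volume-independent)."""
    return 1.0 - (1.0 - ai_catch) / (1.0 - manual_catch)


def monthly_savings(meters_per_month, defect_rate, manual_catch, ai_catch, claim_cost_per_m):
    """Loss under manual inspection minus loss under AI inspection."""
    before = monthly_quality_loss(meters_per_month, defect_rate, manual_catch, claim_cost_per_m)
    after = monthly_quality_loss(meters_per_month, defect_rate, ai_catch, claim_cost_per_m)
    return before - after
```

With the catch rates above, `escaped_reduction(0.65, 0.92)` is about 0.771, the 77% figure; the monthly amounts scale linearly with throughput and claim cost.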

For digital denim texture engineering: a denim operation processing 40+ styles per season runs 4 to 8 physical strike-off rounds per style at $800 to $2,500 each. Cutting strike-offs from 6 to 8 rounds down to 2 to 3 across 45 styles eliminates 3 to 6 rounds per style, worth $108,000 to $675,000 per season in direct sampling costs at those rates. Add a 40% compression in cycle time, a 60%+ reduction in rejected print waste, and faster brand approval, and the system typically pays for itself within two collections.

The less visible but compounding gain is design iteration speed. When digital texture is structurally trustworthy, creative teams iterate in hours instead of weeks. That velocity advantage multiplies across every collection, every season.

Case Vignette: A Mid Size Denim Mill’s Digital Transformation

[Anonymized composite based on operational patterns across multiple engagements]

A denim manufacturer producing 45 fabric styles per season was running 6 to 8 physical strike-off rounds per style before achieving brand approval. Each round involved loom setup, dyeing, physical sampling, international shipping, and a review cycle of 5 to 7 days. Its initial attempt at digital sampling, using a commercial texture scaling tool, produced surfaces that passed internal review but failed brand approval: buyers flagged visible repetition on leg panels and grain misalignment at yoke seams. Rejected prints accounted for 18% of first-round submissions, each rejection costing $1,200 to $1,800 per style in re-render and reprint cycles.

After deploying a structure-aware computational textile reconstruction engine, the team digitized baseline swatches and synthesized full-roll-scale masters in under 48 hours per style. Panel mapping with seam continuity validation eliminated grain misalignment rejections in the first season. Physical strike-offs dropped to 2 to 3 rounds per style. Sampling cycle time shrank by 40%, and rejected print waste fell by over 60%. Seasonal direct savings exceeded $150,000, and the system paid for itself within two collections.

The system did not replace human review. It gave human reviewers a structurally accurate starting point instead of a visually approximate one.

What Separates a Proof of Concept from a Production System

The AI in textile manufacturing market is projected to reach tens of billions of dollars within a decade. But market size is irrelevant if your specific implementation stays stuck in pilot. The gap between PoC and production is not model accuracy. It is engineering rigor: data pipelines that handle contaminated inputs gracefully, validation metrics built by people who understand both AI and textiles, GPU infrastructure sized for 4-billion-pixel workloads, and human-in-the-loop QC workflows that trust the system without blindly depending on it.

At Brainy Neurals, we are an AI development company that builds these systems end to end, not as prototypes but as production-grade engines with measurable quality metrics, predictable timelines, rigorous evaluation at every phase, and clear stakeholder communication from discovery through deployment. Our camera AI expertise spans edge-deployed inspection systems, high-speed line-scan integrations, and real-time quality inspection pipelines across textile, manufacturing, and industrial environments. Security and governance are built in from day one, not bolted on at the end.

Book a discovery call to walk through your specific manufacturing bottleneck, whether that is AI fabric defect detection, digital sampling workflows, denim texture engineering, or the full pipeline. No pitch decks, just an engineering conversation with people who understand both the AI and the textile.

Or request a technical audit of your current quality inspection or digital sampling workflow. We assess scan quality, model performance, pipeline gaps, and validation rigor, and deliver a prioritized roadmap with realistic timelines and clear ROI projections.