Quick answer

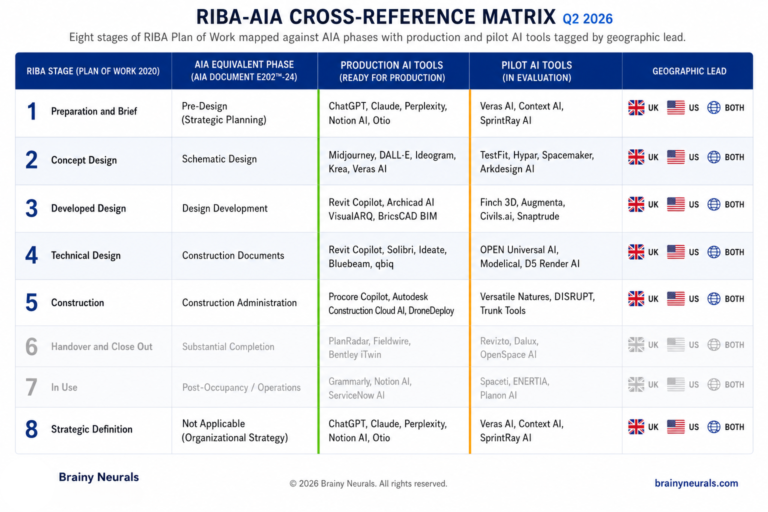

As of Q2 2026, AI is in production across RIBA Stages 0, 2, 3, 4 and 5 — and across AIA Pre-Design, SD, DD, CD and CA — but the depth differs sharply by stage. The heaviest production AI workload by architect-hour sits in Stages 3–4 (Design Development / Construction Documents), not Stage 2 (Schematic Design) where every conference demo lives. The UK leads on specification and code-compliance AI; the US leads on generative early-design AI.

The Contrarian Observation that Opens this Audit

Walk into any architecture conference in 2026 and you will watch a generative design demo. The presenter sketches a site boundary, presses a button, and 47 massing options appear on screen. The room applauds.

Walk into the office of any 200-person practice that has actually shipped AI to production, and the picture is the opposite. The biggest hour-volume deployments are in the unglamorous middle of the RIBA Plan of Work — spec drafting at Stage 4, drawing discrepancy review on the way to Construction Documents, code-compliance check before a Stage 5 tender. The conference circuit shows you Stage 2. The real production work is happening in Stages 3 and 4.

After mapping the full RIBA Plan of Work 2020 against the AIA phases, and tagging every AI deployment I could verify in production at named firms, the pattern is consistent. In 2026, most of the billable hours that AI saves in architecture come from the middle stages, not the front end. This audit walks through every stage of both standards, names the AI tools actually shipping, and tags which side of the Atlantic is ahead.

What "AI in production" Means in Architectural Design

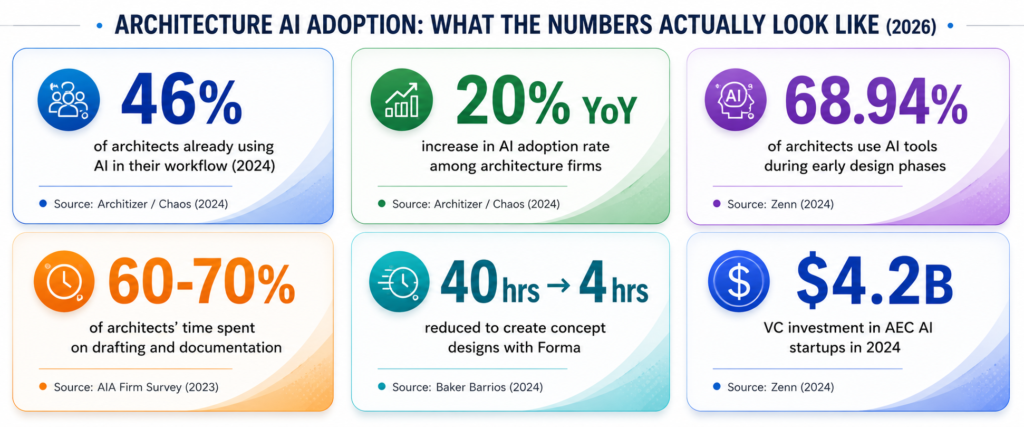

The 2025 Automation in Construction systematic review (161 papers, 2014–2024) found that 68.94% of AI usage in architecture sits in early design phases. That number is real, but it measures research papers — not architect-hours. By architect-hours saved, the picture flips: the 2023 AIA Firm Survey reported licensed professionals spend 60–70% of their time on repetitive technical drafting. The AI tools that compress that workload — spec drafting, drawing review, code compliance — produce the largest absolute time savings even if they appear less frequently in the academic literature.

For this audit, an AI use case is “in production” inside a design phase when:

- It is deployed at multiple Tier-1 architecture firms or large practices, named publicly.

- Output integrates with the design stack the team already uses (Revit, ArchiCAD, Rhino, NBS Chorus, BIM 360, ACC).

- There is a verified outcome — hours saved, error catch rate, or measurable rollout.

- It is in use across multiple projects, not a single pilot site.

Pilot, demo, and whitepaper status do not count. Neither does a vendor saying “AI-powered” without integration evidence.
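The four criteria above can be read as a simple all-or-nothing test. The sketch below is one way to encode them, not the audit's own tooling: the `Deployment` record, its field names, and the reading of "multiple" as "at least two" are assumptions made for illustration, and the Tier-1 or large-practice qualifier is left to whoever fills in `named_firms`.

```python
from dataclasses import dataclass

# Design-stack products the audit lists as integration targets.
DESIGN_STACK = {"Revit", "ArchiCAD", "Rhino", "NBS Chorus", "BIM 360", "ACC"}


@dataclass
class Deployment:
    """One AI use case inside one design phase (hypothetical record shape)."""
    tool: str
    named_firms: list[str]    # firms publicly named as adopters
    integrations: list[str]   # products the AI output lands in
    verified_outcome: bool    # hours saved, error catch rate, or measured rollout
    project_count: int        # projects where the tool is in live use


def is_in_production(d: Deployment) -> bool:
    """Return True only when all four criteria hold."""
    multiple_named_firms = len(set(d.named_firms)) >= 2   # assumption: "multiple" means 2+
    integrates_with_stack = any(i in DESIGN_STACK for i in d.integrations)
    multiple_projects = d.project_count >= 2              # a single pilot site does not count
    return (multiple_named_firms and integrates_with_stack
            and d.verified_outcome and multiple_projects)


pilot = Deployment("ExampleTool", ["Firm A"], ["Revit"], True, 1)
shipped = Deployment("ExampleTool", ["Firm A", "Firm B"], ["Revit"], True, 5)
print(is_in_production(pilot), is_in_production(shipped))  # False True
```

A vendor claim of "AI-powered" with no integration evidence fails the second check regardless of how many firms are named.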

The RIBA-AIA Crosswalk You Actually Need

The two standards do not map cleanly onto each other, which is part of why the AI conversation gets confused. Here is the working cross-reference:

The “Spatial Coordination” rename in RIBA 2020 (formerly “Developed Design”) is one of the more confusing terminology shifts and is criticised inside the UK profession itself. For AI tooling decisions, treat RIBA Stage 3 as Design Development and you will not get lost.
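As a rough illustration, the crosswalk this audit works from can be written as a small lookup table. The RIBA stage names are those of the Plan of Work 2020; the AIA pairing is reconstructed from the stage headings used in this audit and is a working approximation, not an official equivalence. The function name `aia_phase` is hypothetical.

```python
# RIBA Plan of Work 2020 stage -> (RIBA stage name, approximate AIA phase).
# The pairing follows this audit's section headings; it is not an official mapping.
RIBA_TO_AIA = {
    0: ("Strategic Definition", "Pre-Design"),
    1: ("Preparation and Briefing", "Pre-Design"),
    2: ("Concept Design", "Schematic Design (SD)"),
    3: ("Spatial Coordination", "Design Development (DD)"),
    4: ("Technical Design", "Construction Documents (CD)"),
    5: ("Manufacturing and Construction",
        "Bidding and Construction Administration (CA)"),
}

OUT_OF_SCOPE = {6, 7}  # Handover and Use are not covered by this audit


def aia_phase(riba_stage: int) -> str:
    """Return the approximate AIA phase for a RIBA stage number."""
    if riba_stage in OUT_OF_SCOPE:
        return "out of scope for this audit"
    if riba_stage not in RIBA_TO_AIA:
        raise ValueError(f"unknown RIBA stage: {riba_stage}")
    return RIBA_TO_AIA[riba_stage][1]


for stage, (riba_name, aia_name) in RIBA_TO_AIA.items():
    print(f"RIBA Stage {stage} ({riba_name}) ~ AIA {aia_name}")
```

Stages 0 and 1 both collapse into AIA Pre-Design, which is one reason the two standards do not line up one-to-one.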

The audit below walks each stage, lists what AI is doing in production, what is in pilot, and which side of the Atlantic is leading.

Stage 0 + 1 — Pre-Design / AI in Strategic Definition and Briefing

In production: AI-powered site analysis is the dominant deployment. Autodesk Forma Site Design — built on the Spacemaker acquisition — runs AI environmental analyses on sun hours, wind comfort, microclimate, and noise impact. Stantec used Forma Site Design on the Hamdan Bin Rashid Cancer Hospital project to guide sustainability and patient-wellbeing decisions. Baker Barrios Architects reported compressing what was previously a 40-hour site analysis into four hours after scaling up Forma adoption. Across Forma Site Design, sun hours analysis was the most-used AI-driven feature of 2025 (Autodesk, January 2026).

For programming, large language models are now the default tool for stakeholder requirement consolidation and brief synthesis. Firms using ChatGPT or Claude for project-brief drafting report a 60–70% reduction in specification drafting time on standard renovation projects (industry adoption data, 2026).

In pilot: Climate-resilience auto-briefing tools tied to local data feeds. California’s SB-1000 mandates AI-assisted climate resilience reviews for public projects starting in 2026 — that regulation is forcing the pilot-to-production transition by jurisdiction.

Manual: Strategic vision, client relationship work, and the actual approval of the brief.

- In production: Forma Site Design, ChatGPT/Claude brief drafting

- In pilot: Climate-resilience auto-briefing (CA SB-1000)

- Manual: Strategic vision, client relationship, brief approval

Geographic lead: The US is ahead on programming-tier AI adoption volume, driven by larger project budgets and TestFit-class tools that automate feasibility for multifamily, hospitality, and student housing. The UK is ahead on regulated-brief tooling — NBS Chorus integrates the brief into a structured specification framework from Stage 0, which the US lacks at the same standardisation depth.

Stage 2 — Schematic Design / Where the Conference Demos Live

In production: This is the most-talked-about and most-saturated stage for AI tooling. Autodesk Forma Building Design — launched April 22, 2026 with Arcadis as a named development collaborator — is now the schematic design module of the Forma industry cloud, with Revit integration as the first Forma Connected Client. TestFit dominates multifamily and hospitality feasibility studies in North America with real-time generative layouts including unit counts, parking ratios, and pro-forma metrics. Hypar runs production at Obayashi, Goldbeck, Arco Murray, and Adrianse for workplace, healthcare, and data centre typologies — with up to 90% reduction in commercial test-fit time. Higharc dominates the homebuilder segment. Finch demonstrated a Forma + Finch + Revit AI workflow at Autodesk University 2025 that takes Forma site data and generates compliant Revit-native floor plans.

In pilot: Full text-to-3D end-to-end generation. Generative facade design at production fidelity. Maket.ai’s v2 release (planned 2026) extends to zoning verification and HVAC planning — moving toward production but not yet there at Tier-1 scale.

Manual: Conceptual design intent. Narrative. Anything that requires a lead architect’s design judgement on a bespoke building.

- In production: Forma Building Design, TestFit, Hypar, Higharc, Finch

- In pilot: Full text-to-3D, generative facade, Maket.ai v2

- Manual: Conceptual intent, narrative, bespoke judgement

Geographic lead: US is decisively ahead. TestFit’s enterprise traction is North American multifamily-driven. Hypar’s named adopters are global but the bulk of production deployment evidence is US. The exception: Norway/Sweden — Spacemaker originated there, Finch originated there, and Scandinavian firms have run generative SD workflows longer than any US firm of equivalent size.

Stage 3 — Design Development / The Unsung Production Tier

This stage is where the conference circuit goes quiet and the production AI quietly compounds.

In production: Solibri runs across major BIM environments including Revit and analyses BIM data against predefined rules covering code compliance, discipline coordination, clash detection, and internal quality standards. NBS Chorus is the dominant UK specification platform, integrated with the RIBA Plan of Work; named adopters include Allford Hall Monaghan Morris (Chorus Premium) and Ryder Architecture (Bank House Newcastle). NBS Chorus turns specification development from a Stage 4 sequential task into a Stage 3 parallel workflow — and the UK profession has moved decisively into this pattern over the last 18 months.

For US firms, equivalent depth comes from PiAxis (AI-native Revit detailing automation) and D.TO / Design Together (BIM-native AI detailing with sustainability and assembly guidance). Both are growing fast in 2026 but neither has the named-adopter evidence base that NBS Chorus does in the UK.

In pilot: Full generative MEP routing. Closed-loop sustainability optimisation tied to embodied-carbon targets. The Building Safety Act in the UK and ISO 19650 revision (in public consultation March 2026) are driving compliance requirements that pilot tools have not yet ingested.

Manual: Complex coordination judgement, particularly where multiple design disciplines disagree on a contested element.

- In production: Solibri, NBS Chorus (UK), PiAxis, D.TO

- In pilot: Generative MEP, closed-loop sustainability

- Manual: Complex coordination judgement

Geographic lead: UK is ahead by a clear margin. NBS Chorus has no US equivalent at the same standardisation depth, and the UK Building Safety Act compliance pressure has accelerated adoption faster than any US driver. The US is catching up on the BIM-native detailing side via PiAxis and D.TO, but not on the structured specification side.

Stage 4 — Construction Documents / Where AI Workloads Compound

This stage is the largest absolute consumer of architect-hours per the 2023 AIA Firm Survey, and it hosts the AI category that has matured fastest in 2025–2026.

In production: Blueprints AI generates permit-ready construction documents (floor plans, site plans, sections, elevations, details, title sheets) compliant with the International Building Code (IBC), ADA, and local zoning codes across all 50 US states. The platform was trained on more than 6 million data points, and licensed architects retain final review and approval authority. mbue.ai positions itself as “autocorrect for construction documents”: instant AI review of architectural drawings. InspectMind runs AI plan check and drawing review starting at $50–$100 per project, finding coordination issues, code conflicts, and constructability problems in hours rather than weeks. UpCodes Copilot, recently upgraded with project memory across sessions, is the dominant US code-compliance assistant for IBC and state-specific code research. Avoice (covered in ArchDaily, February 2026) is the emerging AI-powered technical documentation organiser for accumulated firm knowledge.

Drawing discrepancy review in CDs, where two drawings in a set contradict each other and the contradiction propagates downstream into RFIs and change orders, has quietly become the most ROI-positive single AI deployment in 2026.

In pilot: Auto-generated CD sets at full Tier-1 firm fidelity. Most CD generation tools work well for repeatable typologies (multifamily residential, commercial fit-outs, single-family residential) but struggle on bespoke or heritage projects.

Manual: Final detail design on complex systems. Anything where the architectural judgement carries a liability tail and cannot be delegated to an AI output.

- In production: Blueprints AI, mbue, InspectMind, UpCodes Copilot, Avoice

- In pilot: Auto-generated bespoke CD sets

- Manual: Complex detail design, liability-carrying judgement

Geographic lead: US is ahead on CD-generation AI; UK is ahead on spec-side automation (NBS Chorus running parallel to drawing CDs from Stage 3). The two markets are running different races: the US is automating drawing production; the UK is automating specification production. By 2027, the integration of both will be the next frontier.

Stage 5 — Bidding and Construction Administration

In production: AI takeoff (Togal.AI — 76% faster than the legacy On-Screen Takeoff per peer-reviewed University of Kansas study; Kreo); AI scheduling (ALICE Technologies, nPlan); AI submittal review (Trunk Tools — $40M Series B in August 2025; Procore Copilot at AMLI Residential; Fresco AI); AI permitting (PermitFlow — $54M Series B, 12M+ permitting data points). CV-based progress tracking from OpenSpace and Buildots feeds back into the architect’s coordination loop on active projects.

In pilot: AI bid-strategy and win-rate prediction at portfolio scale.

Geographic lead: US is ahead on construction-side AI adoption volume. UK contractors are catching up but the named-adopter evidence base is dominated by US firms (Suffolk, Bechtel, Skanska, Turner) and a handful of UK names (Sir Robert McAlpine, Vinci UK).

Stage 6 + 7 — Out of Scope for This Audit

This audit explicitly does not cover post-occupancy AI, facilities-management AI, or proptech beyond Stage 7 — those are separate categories with separate vendor ecosystems and separate buyer journeys.

The boundary matters because conflating them is one of the most common analytical errors I see in 2026 design-tech coverage.

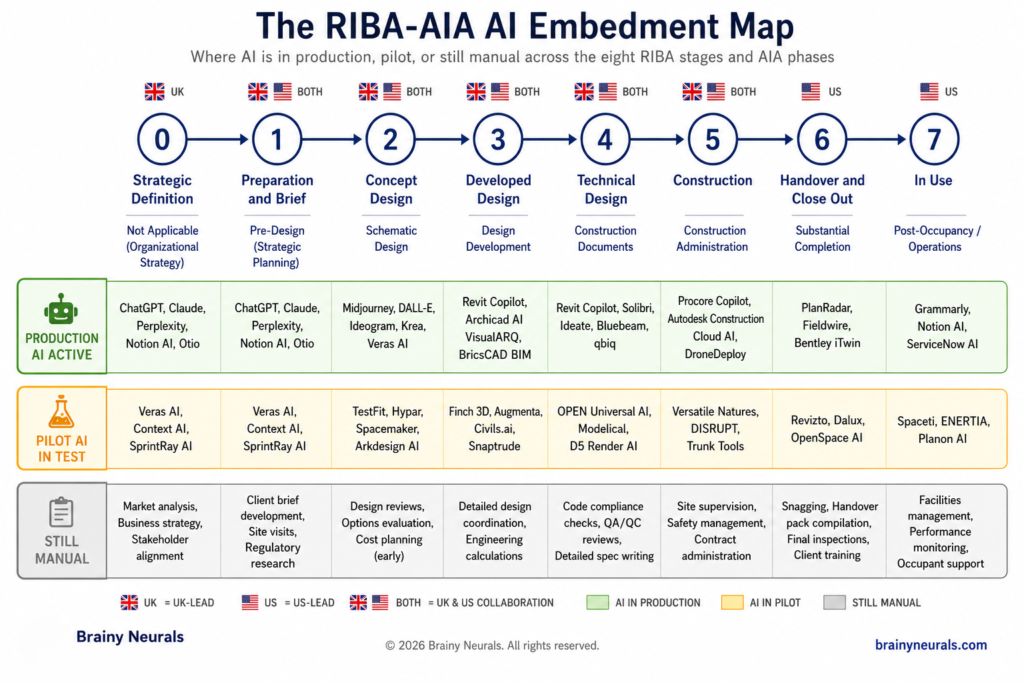

The conference circuit shows you Stage 2 / Schematic Design. The biggest hour-volume production deployments are in Stages 3 and 4 — spec drafting, drawing discrepancy review, code compliance check. That gap is where most 2026 AI design budgets are being misallocated.

The RIBA-AIA AI Embedment Map

The cross-reference matrix below is the proprietary asset of this audit. Use it before you fund any 2026 design-AI investment to know whether the use case is production-ready, pilot-stage, or still manual.

What Mitesh has seen across BN's design-AI deployments

“On a recent engagement with an engineering practice that owns the construction-document phase across multiple regional offices, we shipped AI-powered drawing analysis that compressed plan approvals from three weeks to four days — a 70% reduction in cycle time. The wedge was not the model. The wedge was that the AI’s output landed inside the team’s existing drawing review workflow without forcing a new dashboard. That integration discipline is what separates Stage 4 AI deployments that ship from those that don’t.” — Mitesh Patel, NVIDIA Certified AI Architect, Founder & Director, Brainy Neurals.

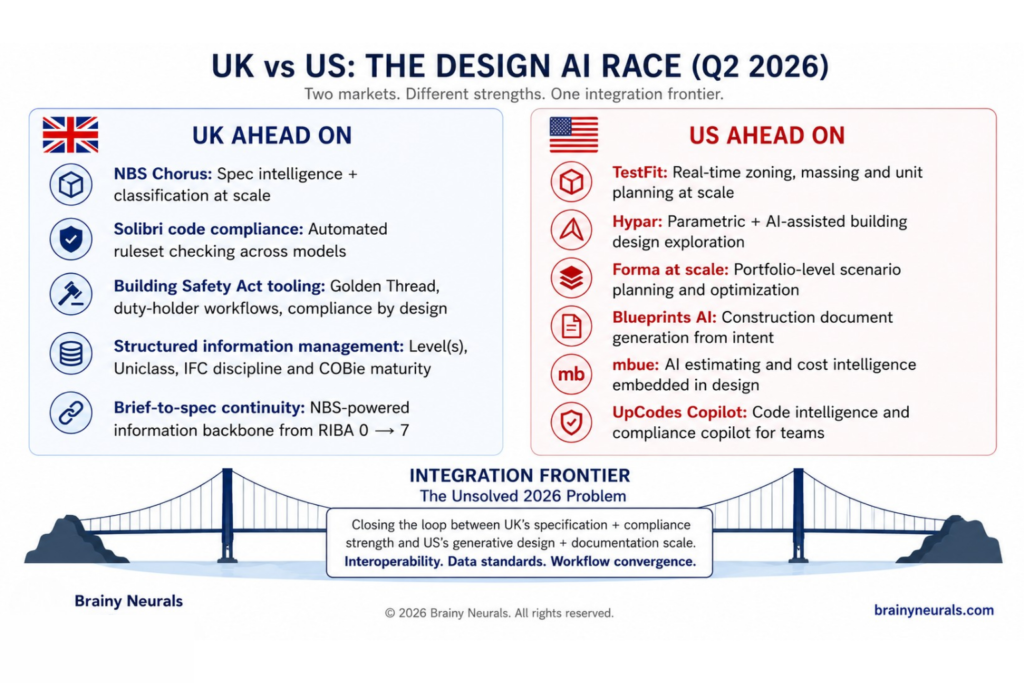

The Geographic Split — Why It Matters for Your 2026 Software Stack

The UK and US are running two different AI races inside the design phases, and most firms operating across both jurisdictions have not registered this yet.

The UK is ahead on:

- Specification AI (NBS Chorus integrated into the RIBA Plan of Work from Stage 3)

- Code-compliance and Building Safety Act tooling (driven by the regulatory pressure of the post-Grenfell reform)

- Structured information management discipline (ISO 19650 baseline)

- Brief-to-spec continuity through a single platform

The US is ahead on:

- Generative early-design AI (TestFit, Hypar, Forma at scale; Finch traction at large firms)

- Construction-document automation (Blueprints AI, mbue, InspectMind, PiAxis)

- Code-compliance assistants for IBC (UpCodes Copilot)

- Volume of named-adopter case studies across enterprise AEC

For a firm operating across both markets — Foster + Partners, KPF, HOK, Stantec, Arcadis, Gensler, AECOM — the practical implication is that the AI software stack should not be uniform. The UK office should lead on NBS Chorus + Solibri + Forma. The US office should lead on TestFit/Hypar + UpCodes + Blueprints AI. The integration layer between them is the unsolved problem and the next frontier.
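The non-uniform stack described above can be written down as a per-region configuration. This is a minimal sketch using only the tools named in that paragraph; the dictionary layout and the helper names `stack_for` and `shared_tools` are illustrative assumptions, not a recommended schema.

```python
# Lead tools per office region, as named in this audit.
REGIONAL_STACK = {
    "UK": ["NBS Chorus", "Solibri", "Forma"],
    "US": ["TestFit/Hypar", "UpCodes", "Blueprints AI"],
}


def stack_for(offices: list[str]) -> dict[str, list[str]]:
    """Return the lead tools for each region where the firm has an office."""
    return {r: REGIONAL_STACK[r] for r in offices if r in REGIONAL_STACK}


def shared_tools(offices: list[str]) -> set[str]:
    """Tools that appear in every selected region's lead stack."""
    stacks = [set(tools) for tools in stack_for(offices).values()]
    return set.intersection(*stacks) if stacks else set()


print(stack_for(["UK", "US"]))
print(shared_tools(["UK", "US"]))  # set()
```

The empty intersection is the point: with these lead stacks, nothing is common to both offices, so any cross-office workflow depends on an integration layer that the firm has to design itself.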

Most architects have not registered that the UK and US are running two different AI races inside the design phases. The UK is automating specifications. The US is automating drawings. By 2028, the integration of both will be the production frontier — and most firms are not architecting their stack to reach it.

A confession about how AI specifications quietly fail at Stage 4

Across the AI specifications we have rewritten mid-project — and we are at 40+ rewrites at this point — the failure pattern at Stage 4 / Construction Documents is always the same. The vendor’s AI works in their demo. It works on the firm’s pilot project. Then it lands inside the team’s actual drawing review cycle and falls apart, not because the model accuracy dropped but because the integration spec was never written for the messy reality of mid-project change orders, drawing revisions out of sequence, and the partner-signed drawing that contradicts the consultant-signed drawing. The fix is rarely better fine-tuning. The fix is rewriting the spec until the AI’s output appears inside the existing drawing review workflow without asking the team to log into a third dashboard. That is the unglamorous work that decides whether the Stage 4 AI deployment ships.

When This Map Breaks Down — Failure Modes Worth Naming

This audit applies cleanly to firms above 50 employees with an established BIM workflow on Revit or ArchiCAD, running standard commercial, multifamily, healthcare, or workplace typologies. Four conditions break the conclusions.

Bespoke architecture

Generative design tools (TestFit, Hypar, Forma) work on typologies that have repeatable building logic. They do not work on the Frank Gehry, Zaha Hadid, or Heatherwick category of bespoke architectural intent. For those firms, AI sits primarily in DD/CD documentation, not in concept generation.

Conservation and heritage projects

RIBA’s Conservation Overlay to the Plan of Work is explicit about the survey-and-specialist requirements for heritage assets. Most production AI tools do not yet ingest heritage-specific constraints or specialist subcontractor handoffs. Heritage projects in the UK in particular run a different AI stack — or no AI stack at all on the design side.

Joint ventures with mismatched IT governance

The largest single cause of stalled Stage 3–4 AI deployments in 2025 was a JV partner refusing to share the BIM data the AI needed. The data-rights test fails before the model is even chosen. If you operate JVs across UK/US/EU, build the data-sharing protocol before you fund the AI tool.

Mid-size firms without BIM maturity

Most production AI tools assume Revit or ArchiCAD as a baseline. Firms still running on AutoCAD or paper-PDF workflows for parts of Stage 3–4 cannot deploy the production tools above without a BIM migration first. The honest sequencing is: migrate to BIM, then deploy AI. Reversing that order produces failed pilots.
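The scope statement and the four failure modes above can be combined into a quick screening check. The sketch below is hypothetical: the `FirmProfile` fields and the `blockers` function are names invented for illustration, and the thresholds simply restate the conditions in this section.

```python
from dataclasses import dataclass

BIM_PLATFORMS = {"Revit", "ArchiCAD"}
STANDARD_TYPOLOGIES = {"commercial", "multifamily", "healthcare", "workplace"}


@dataclass
class FirmProfile:
    """Hypothetical description of a firm and project; field names are illustrative."""
    employees: int
    authoring_platform: str        # e.g. "Revit", "ArchiCAD", "AutoCAD"
    typology: str
    is_heritage: bool
    jv_data_sharing_agreed: bool   # True when there is no JV or a protocol is signed


def blockers(firm: FirmProfile) -> list[str]:
    """Return reasons the audit's conclusions may not transfer; empty means none found."""
    found = []
    if firm.employees <= 50:
        found.append("outside the audit's scope of firms above 50 employees")
    if firm.authoring_platform not in BIM_PLATFORMS:
        found.append("no BIM baseline: migrate to BIM, then deploy AI")
    if firm.typology not in STANDARD_TYPOLOGIES:
        found.append("bespoke typology: expect AI in DD/CD documentation, not concept generation")
    if firm.is_heritage:
        found.append("heritage project: most production tools do not ingest heritage constraints")
    if not firm.jv_data_sharing_agreed:
        found.append("JV data sharing unresolved: agree the protocol before funding a tool")
    return found


firm = FirmProfile(120, "AutoCAD", "multifamily", False, True)
for reason in blockers(firm):
    print(reason)
```

For the example firm, the only blocker reported is the missing BIM baseline, which matches the sequencing advice: migration comes first.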

What the Numbers Actually Look Like

- 46%: Architects already using AI tools in their projects

- +20%: Year-over-year adoption increase, 2024 to 2025

- 68.94%: Of AI usage in early design (161 papers, 2014–2024)

- 60–70%: Of architect time on repetitive technical drafting

- 40hr → 4hr: Baker Barrios site analysis after Forma adoption

- $4.2B: VC in AEC AI startups in 2024, up from $1.8B in 2022

Sources: Architizer + Chaos 2024/25 survey (n=1,227); Automation in Construction systematic review; 2023 AIA Firm Survey; Baker Barrios Architects; industry VC data. Verify at source before citing in board materials.
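Two of the figures above are before-and-after pairs, and it can help to see the implied percentages. The short calculation below uses only the numbers quoted in this section; the helper name `percent_change` is an assumption for illustration.

```python
def percent_change(before: float, after: float) -> float:
    """Relative change from before to after, in percent (negative means a reduction)."""
    return (after - before) / before * 100


# Baker Barrios site analysis: 40 hours down to 4 hours.
site_analysis = percent_change(40, 4)

# VC in AEC AI startups: $1.8B in 2022 up to $4.2B in 2024.
vc_growth = percent_change(1.8, 4.2)

print(f"Site analysis time: {site_analysis:.1f}%")   # Site analysis time: -90.0%
print(f"VC funding 2022 to 2024: {vc_growth:+.1f}%")  # VC funding 2022 to 2024: +133.3%
```

In other words, the site-analysis figure is a 90% reduction in hours, and the VC figure is a little more than a doubling over two years.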

What this Means for Your 2026 Design-Tech Roadmap

The map above is a snapshot of where AI sits in architectural design in Q2 2026. By Q1 2027 several of the pilot tools will have graduated, the Forma + Finch + Revit workflow will be standard at large practices, and the Building Safety Act compliance floor in the UK will be live. The architectural decision waiting on your desk this quarter is not which AI tool to buy. It is whether your software stack is geographically calibrated to the AI race your firm actually competes in.

If you run a practice above 100 architects:

Fund & Scale Immediately

Production-Ready Now

- Forma Site Design + Building Design (Stage 0–2)

- TestFit or Hypar for your primary typologies (Stage 2)

- NBS Chorus if you have a UK office (Stage 3–4)

- Solibri as the Stage 3 verification spine

- UpCodes Copilot as Stage 4 code-compliance baseline if US office

12–18 Months from Default

- Blueprints AI and mbue for CD generation

- PiAxis or D.TO for AI-native Revit detailing

- Avoice for technical documentation organisation

Skip Until 2028+

Not Production-Ready

- Full text-to-BIM end-to-end generation

- Fully agentic AI design partners

- Generative MEP at production fidelity for bespoke buildings

For a generative schematic design deep-dive at the level of architect-hours per week, see the cluster post (T-005). For the build vs buy decision on whether to license a vendor or develop firm-specific tooling, see T-039.

Before you standardize an AI design stack, run it through the geographic-calibration test.

Get the AI Readiness Assessment — 25 questions to map where your firm sits across the eight RIBA stages, with a personalized scorecard and the three highest-leverage moves for your firm size, geography, and BIM maturity.